User Story in Agile: A Practical Guide for Product Teams

Master the user story in Agile with our guide. Learn to write, split, and validate stories with templates, examples, and synthetic testing.

Most advice about a user story in agile starts and ends with the template. That’s too shallow to be useful.

A story isn't valuable because it says, “As a user, I want...” in the right format. It’s valuable when the team can use it to decide what to build, how to validate it, and whether it solved a real problem. Teams that treat stories like mini contracts usually create backlog theater: neat tickets, weak understanding, and too much rework after sprint review.

A stronger way to work is to treat each story as a testable hypothesis. The story captures a user goal, the team discusses what must be true for that goal to matter, and then they look for evidence. That evidence can come from prototypes, stakeholder review, acceptance tests, or synthetic testing before engineering starts. The point isn't perfect wording. The point is reducing uncertainty early.

That shift changes how you refine. Instead of asking, “Is this story written correctly?” ask, “Can design, engineering, and research act on this without guessing?” If the answer is no, the story isn't ready. If it can't be turned into a realistic mission for validation, it's still too vague.

Teams that want a cleaner starting point can use a simple user story template, but the template only helps when it leads to sharper conversations and clearer proof of value.

Beyond the Template What is a User Story in Agile

The common definition is incomplete. A user story in agile is not just a sentence pattern. It’s a compact way to express a user need so a cross-functional team can discuss it, challenge it, and confirm what “done” means.

User stories came from Extreme Programming, and Ron Jeffries formalized the 3 C's model, Card, Conversation, and Confirmation, as part of that practice. That thinking became integral to the 2001 Agile Manifesto, which is why stories were never meant to replace collaboration with documentation. They were meant to trigger it, as described in this overview of user stories in Agile and the 3 C's.

Why fixed stories slow teams down

When teams freeze a story too early, they usually lock in the wrong level of detail. The ticket becomes too broad for engineering, too rigid for design, or too abstract for QA. Then the sprint spends its energy resolving avoidable ambiguity.

A better story behaves like a working assumption:

It names a user and intent: enough to anchor prioritization.

It leaves room for learning: because the first draft is rarely the best draft.

It defines proof: so the team knows what success looks like before implementation starts.

Practical rule: If a story can't survive a hard conversation with design, engineering, and QA, it isn't a strong story yet.

What good teams actually do

Strong teams refine the same three things repeatedly across sprints:

The user goal

What is the person trying to accomplish right now, in context?The acceptance criteria

What observable conditions would prove this outcome works?The surrounding constraints

What edge cases, dependencies, risks, or usability issues matter enough to shape the work?

That’s why the template is only the front door. The actual work starts after the first sentence is written.

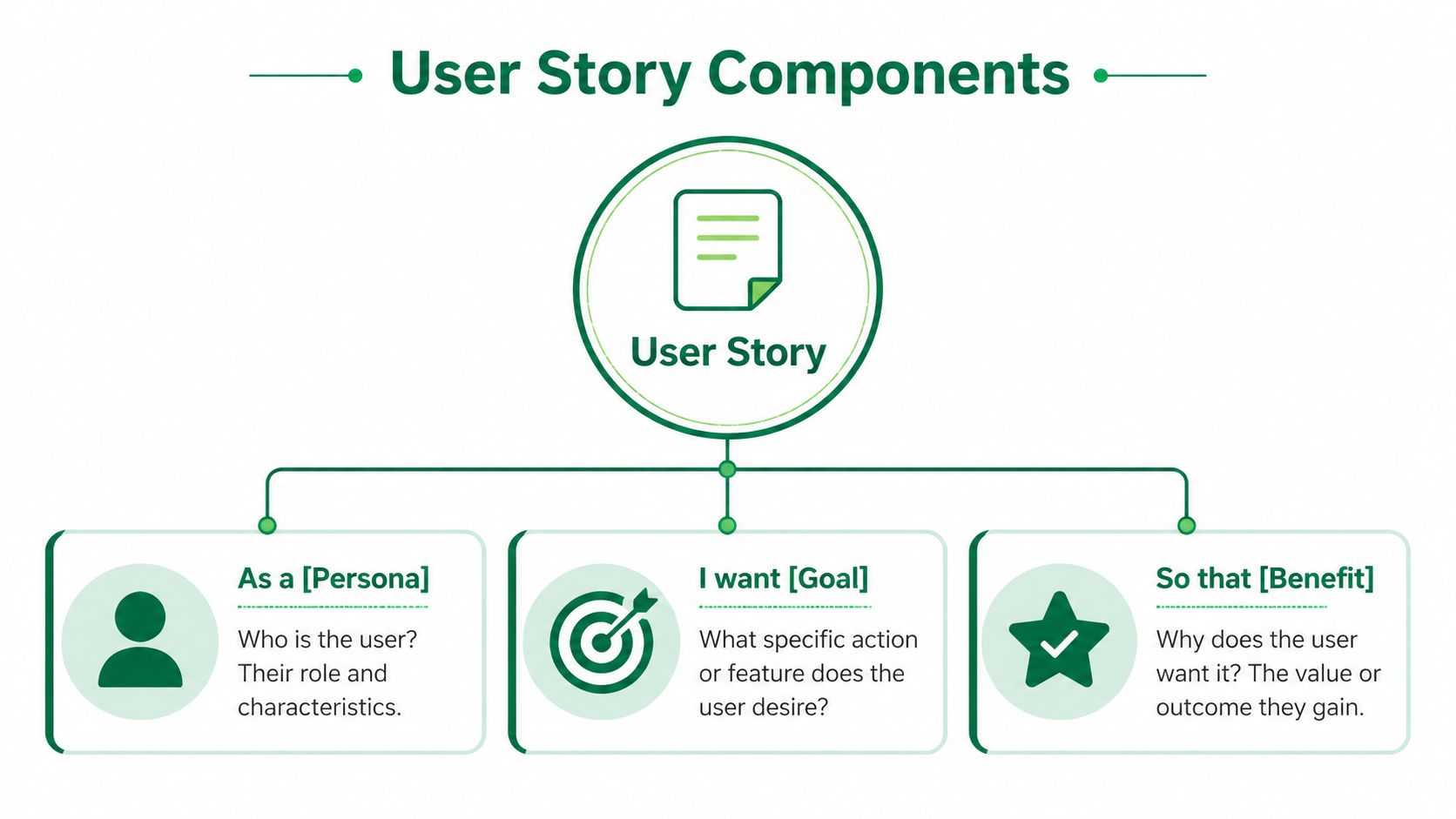

Deconstructing the Core Components of a User Story

Teams are familiar with the format:

As a [persona], I want [goal], so that [benefit].

That structure is useful because it forces clarity around three things: who needs something, what they need, and why it matters. But a story becomes effective only when the team treats that line as a prompt for deeper alignment.

Persona goal and benefit

The simplest way to improve story quality is to stop writing for “the user.” Generic actors create generic backlog items. A persona doesn't need to be elaborate, but it should be specific enough that the team can picture the situation.

Three checks help:

Persona: Is this a real user type with a distinct need?

Goal: Does the story describe an outcome, not a feature request disguised as one?

Benefit: Is the value meaningful enough to justify the work?

A weak story says, “As a user, I want advanced search.”

A stronger story says, “As a returning shopper, I want to narrow products quickly so I can compare relevant options without restarting my search.”

The second version gives design and engineering room to solve the problem, instead of forcing a UI choice too early.

The 3 C's in practice

Jeffries' model is still the most practical lens for story writing:

Card

The written story is a placeholder, not the complete requirement.Conversation

Product, design, and engineering use the story to surface assumptions, constraints, and trade-offs.Confirmation

The team agrees on testable acceptance criteria that show whether the story is done.

The card should be short. The conversation should be rich. The confirmation should be hard to misread.

That last part matters. Teams often write decent cards and hold decent conversations, then sabotage the work with weak confirmation. If acceptance criteria are vague, every role invents its own version of success.

The story as a social object

A useful story aligns people who think differently. Product cares about value, design cares about usability, engineering cares about feasibility, and QA cares about verifiability. The story gives them a shared object to refine together.

That’s why the text on the ticket should stay light. The richer detail belongs in the discussion and in the confirmation points that emerge from it.

How to Write Testable Stories with INVEST and Acceptance Criteria

Most backlog problems don't come from bad intentions. They come from weak refinement. According to Digital.ai’s 2025 State of Agile reporting cited in Atlassian’s guide to Agile user stories, 42% of respondents cite inadequate user story refinement as a top barrier to Agile success, with startups reporting 30% higher rates of story splitting failures, leading to a 25% loss in sprint velocity.

That’s why INVEST still matters. Not as a checklist you wave over tickets, but as a practical way to decide whether a story is ready for sprint planning.

Use INVEST as a gate not a slogan

Here’s what each letter should mean in day-to-day product work:

Independent

If the story can't move without three others landing first, planning gets messy fast. Some dependency is normal, but heavy coupling usually means the story is still too big or sliced in the wrong direction.Negotiable

A story should define the problem and the outcome. It shouldn't hard-code the solution unless a constraint requires it.Valuable

The value must be visible to a user, customer, or business outcome. If the benefit is invisible, it may belong as a task or technical story instead.Estimable

If engineering can't estimate it, they usually don't understand it yet. That’s not a failure. It’s a signal to refine.Small

Small means likely to fit comfortably inside a sprint, not merely short on paper.Testable

The team must be able to verify success without arguing about interpretation after the work is shipped.

From vague to valuable user story refinement

Vague Story (Before) | INVEST-Compliant Story (After) |

|---|---|

As a user, I want better checkout. | As a returning customer, I want to review delivery details before payment so that I can correct mistakes before placing my order. |

As a user, I want a smart dashboard with filters and charts. | As a sales manager, I want to filter pipeline results by team so that I can spot stalled deals during weekly review. |

As a user, I want invoice options. | As a mobile ticket buyer, I want to understand when an invoice will be issued so that I can choose the right payment path without confusion. |

Write acceptance criteria that prove behavior

Good acceptance criteria don't describe everything. They describe the minimum observable truths that must be present for the story to count as done.

A practical pattern is:

State the scenario

State the expected outcome

Include critical edge cases

Avoid prescribing implementation unless needed

For example, if the story is about saving a wishlist item, useful criteria might cover adding, viewing, and removing saved items. Weak criteria would say “build backend endpoint” or “use modal with icon button.” Those are implementation choices, not user outcomes.

Working advice: If QA, design, and product can each read the criteria and reach the same conclusion about success, the story is probably ready.

A defined testing path matters too. Teams using any form of user acceptance testing software tend to write cleaner acceptance criteria because they have to translate intent into something observable.

Keep the Definition of Done separate

Acceptance criteria answer, “Did this story solve the right problem?”

Definition of Done answers, “Did we complete the work to team standard?”

Don’t merge them. A story can meet its user-facing criteria and still fail the team's release expectations. Keeping those concepts separate prevents confusion during sprint review.

User Story Examples and Common Pitfalls to Avoid

A story often looks simple on the surface and hides a lot underneath. In practice, a single user story often encapsulates 30 or more technical requirements, and framing it in the user's business language helps teams reduce the kind of miscommunication that affects 70% of software projects while improving success rates by 2 to 3x, according to 3Pillar Global’s article on capturing technical details with user stories.

That’s why broad intent has to get sharper as evidence comes in.

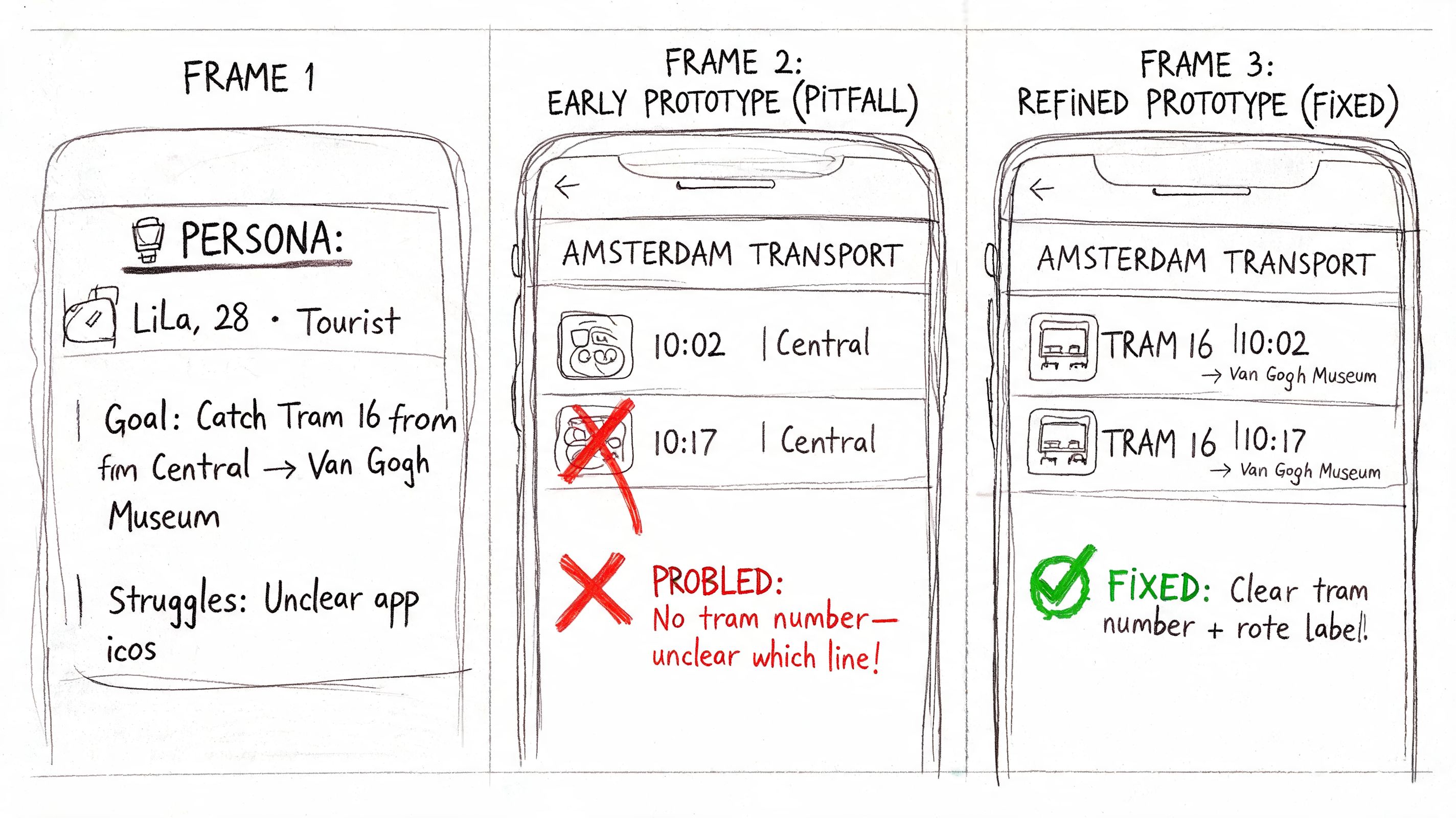

How a story evolves after review

Take a mobile transport flow. The first draft might be:

As a rider, I want to buy a ticket quickly on mobile so that I can start my trip without delay.

That’s a decent start, but it’s still broad. After prototype review, the team may discover that people hesitate on quantity controls, struggle with onboarding copy, and misread invoice behavior. The original intent remains valid, but the story is no longer precise enough.

A stronger backlog would split it into separate stories such as:

Touch target usability for ticket quantity controls

Clear payment and invoice copy during purchase

Explicit invoice behavior based on payment choice

That’s good refinement. The team didn't change the problem space. They reduced ambiguity.

What strong examples have in common

Better stories usually do three things well:

They describe the task from the user's perspective

Not from a system capability, component, or internal team preference.They isolate one meaningful outcome

One story, one clear purpose.They stay open on implementation

The team can still choose the right design or technical approach.

Broad intent is acceptable in discovery. It becomes a problem when it reaches sprint planning unchanged.

Common pitfalls that weaken backlogs

Some anti-patterns show up repeatedly:

The iceberg story

It looks small in Jira, but it hides too much scope. These stories usually contain multiple user goals and vague acceptance criteria.The technical chore story

It’s written as if the developer is the user, but there’s no visible user value. This work may still matter, yet it belongs in technical stories, tasks, or enabling work.The prescriptive story

It dictates UI patterns or architecture too early. That narrows the team's options before they’ve explored the problem.The story with no reason

If the “so that” clause is weak or missing, prioritization becomes political. The backlog fills with requests instead of outcomes.The approval-only story

Everyone signs off on it in a meeting, but nobody can validate it in use. These stories often pass planning and fail in reality.

The practical fix is simple. Write stories in user language, then force each one through a real validation lens.

How to Split Estimate and Prioritize User Stories

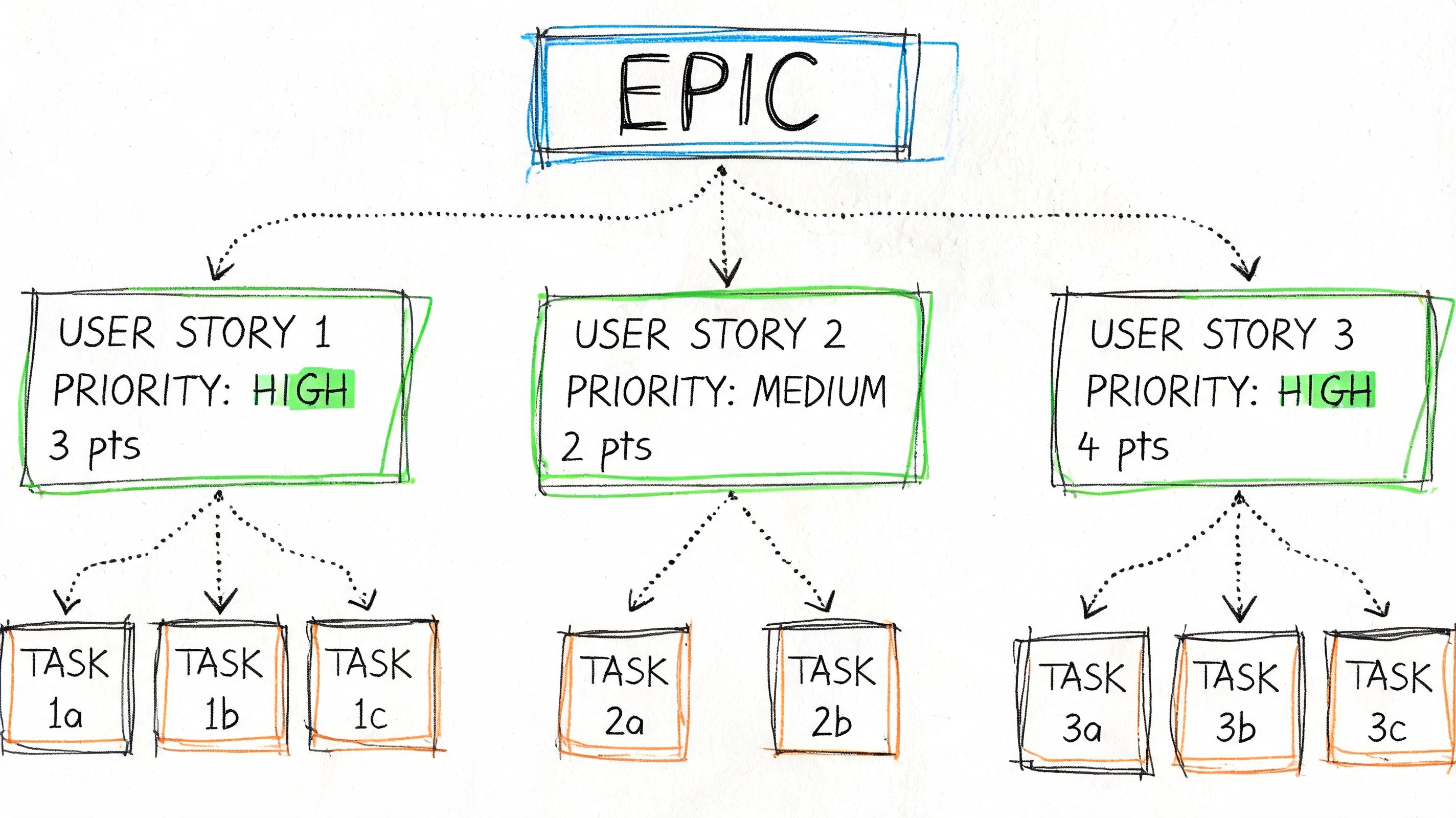

Backlogs become manageable when teams respect the hierarchy. An epic captures a larger user outcome. A story slices that outcome into a deliverable increment. Tasks describe the implementation work needed to complete the story.

Split by outcome not by function

Many teams split badly because they divide work by component. Frontend in one story, backend in another, API in a third. That creates dependencies without delivering anything usable.

A better method is vertical slicing. Each story should produce a thin but complete piece of value.

Useful split patterns include:

By user step

Search first, then compare, then purchase.By scenario

Happy path now, unusual cases after.By user type

New customer flow separate from returning customer flow.By business rule

One policy or condition at a time, if each can stand on its own.

If you're planning an MVP and need a sense of how those slices connect to architecture decisions, Buttercloud’s piece on scalable technical assets is a practical reference for thinking about what should be lightweight now versus built to support later growth.

Estimate for learning not for promises

Story points help teams compare effort and uncertainty. They are not time commitments in disguise.

Leading Agile’s analysis on how many user stories per sprint recommends teams commit to 5 to 15 user stories per sprint. It also notes that velocity is measured in story points, often 20 to 40 points per 2-week sprint, not raw story count, and that planning accuracy can improve from 60% to 85% within 3 to 5 iterations as teams calibrate.

That supports a few pragmatic habits:

Use planning poker or t-shirt sizing early

Not to be precise, but to expose assumptions.Re-estimate after meaningful refinement

If the story changed, the estimate should too.Prioritize by value and readiness together

A valuable story with unresolved ambiguity can still be the wrong sprint choice.

The healthiest backlog isn't the one with the most detail. It's the one where the next few stories are clear, small, and worth doing now.

Keep prioritization grounded

A product manager should ask four questions before a story enters sprint commitment:

Does this solve a real user problem?

Is the scope small enough?

Can the team estimate it with confidence?

Do we know how we'll verify success?

If any answer is no, refinement isn't finished.

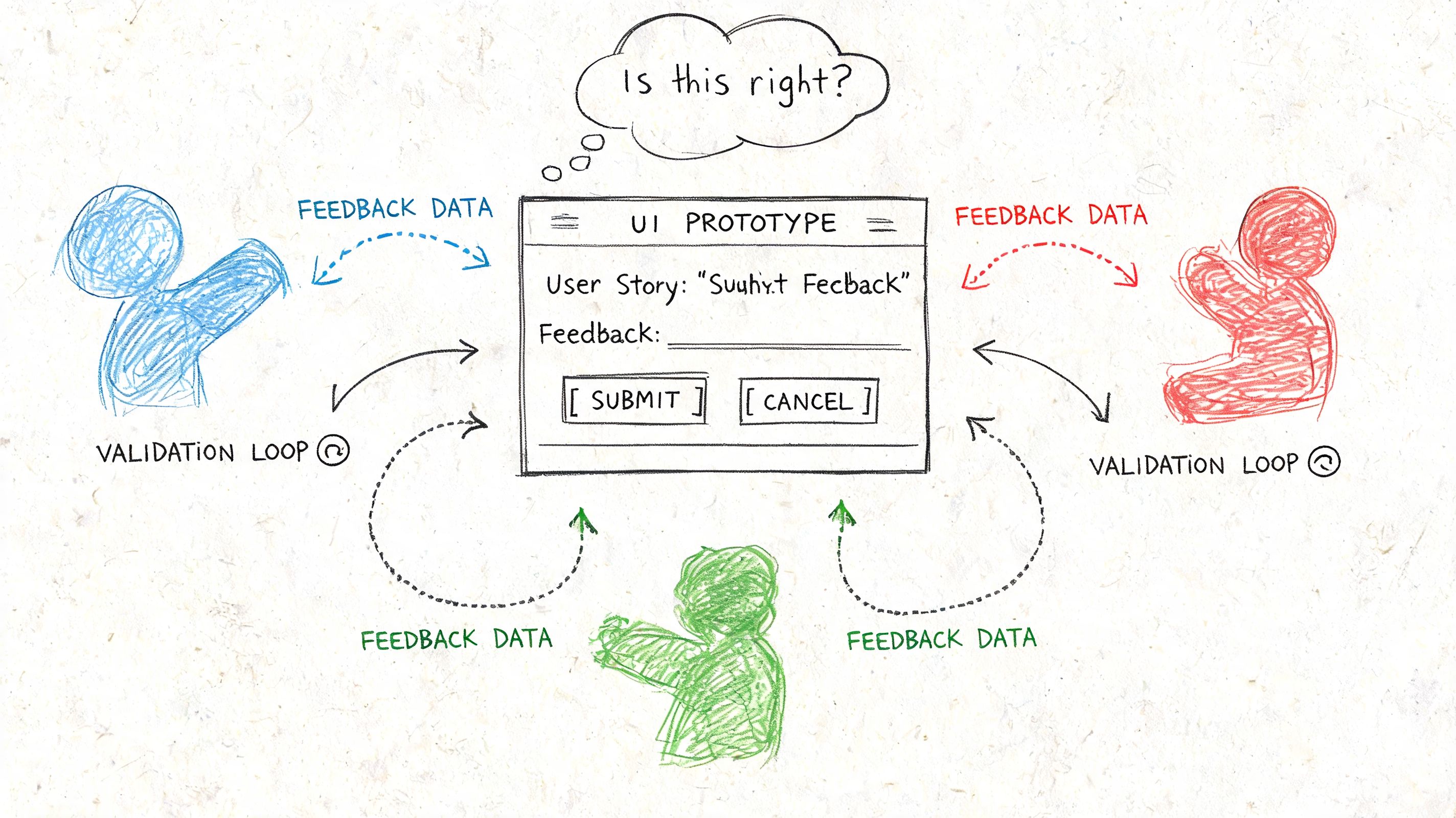

Validating Story Hypotheses with Synthetic User Testing

The biggest waste in agile isn't bad coding. It's building the wrong thing with confidence.

That’s why pre-development validation matters. If a story is a hypothesis, the team needs a fast way to pressure-test it before engineering spends the sprint implementing assumptions that should have been challenged earlier.

Turn the story into a mission

A practical validation loop looks like this:

Take the draft story.

Convert the goal into a realistic task or mission.

Put a prototype or flow in front of test participants.

Observe whether they can complete the task and where they hesitate.

Refine the story, criteria, or scope before it reaches development.

Synthetic testing changes the cadence. Instead of debating a ticket in backlog grooming for half an hour, the team can test whether the intent is understandable and whether the flow supports it.

One option is Uxia, which lets teams upload image or video prototypes, define a mission and audience, and run synthetic UX tests that return findings in roughly 10 to 15 minutes, based on the publisher brief for the platform. In practice, that makes it possible to validate a story within the same iteration rhythm used for planning and review, rather than pushing feedback into a later research cycle.

Where AI-backed story validation is heading

There’s a broader shift happening here too. An emerging trend is integrating AI-generated synthetic user stories and validation into backlogs. A Gartner projection says 35% of Agile teams will use AI for story creation and testing by 2027 to cut refinement time by 60%, as cited in NN/g coverage referenced here on user story mapping.

That doesn’t mean teams should hand story quality over to AI. It means product teams can use AI to generate inputs, test assumptions faster, and identify friction earlier.

The discipline still matters:

Human review is necessary because synthetic output can still miss strategic nuance.

Mission quality matters because vague prompts produce vague insight.

Backlog ownership stays with the team because prioritization is a product decision, not a model decision.

For a deeper look at the workflow, this guide to synthetic user testing lays out how teams can structure tests around realistic missions.

Here’s a useful walkthrough of the thinking in action:

Replace excessive documentation with fast evidence. Story quality improves when teams learn before they build.

From Static Requirements to Actionable Insights

A strong user story in agile is not a polished sentence sitting in a backlog. It’s a small, testable expression of user value that helps the team decide what to learn next.

That mindset changes everything. Stories stop acting like frozen requirements and start acting like decision tools. Refinement becomes less about wording and more about clarity. Acceptance criteria become less about formality and more about proof. Sprint planning gets easier because the team is choosing work it understands, not work it hopes will make sense later.

The teams that get the most from user stories shorten one loop above all others: the loop between an idea and evidence. They write less fiction into tickets, ask better questions in refinement, and validate earlier.

That’s where synthetic testing platforms fit naturally. They help teams turn a draft story into a mission, observe whether it holds up, and adjust before the expensive part of delivery begins.

If you want to shorten the path from story draft to validated feature, Uxia gives product teams a way to test prototype flows with synthetic users, capture friction quickly, and refine backlog items with evidence instead of opinion.