User Experience Researcher: A Complete 2026 Guide

Become a user experience researcher. Our 2026 guide covers the role, key methods, skills, and how AI tools like Uxia are shaping the future of UX.

A release goes live. The team is proud of the new flow, the UI looks polished, and the roadmap box is ticked. A week later, support tickets spike, conversion stalls, and nobody can agree on why. Design blames unclear requirements. Product blames execution. Engineering points out that everything worked as specified.

A user experience researcher closes this gap.

The job isn’t to add ceremony to product work. It’s to surface the friction, confusion, mistrust, and unmet expectations that teams usually discover too late. Good researchers stop teams from building confidently in the wrong direction. Great researchers do that while keeping the work practical, fast, and tied to product decisions.

What Is a User Experience Researcher

A user experience researcher studies how people use products, what they expect to happen, where they get stuck, and why they make the choices they do.

That sounds simple. In practice, it cuts through a lot of expensive guesswork.

The role in plain terms

If a product manager asks, “Will users want this?”, a researcher helps answer that before launch.

If a designer asks, “Why is this step failing?”, a researcher investigates the behaviour behind the drop-off.

If leadership asks, “What should we fix first?”, a researcher turns scattered observations into a clear priority.

That’s why I think of the role less as a test runner and more as a detective for user behaviour. The output isn’t raw data alone. It’s evidence that helps a team make better calls.

A strong researcher usually works across several activities:

Discovery work to uncover needs, pain points, and unmet expectations

Evaluative work to test concepts, prototypes, and live experiences

Synthesis to turn messy findings into patterns and decisions

Stakeholder alignment so research changes the roadmap instead of dying in a slide deck

Why the role matters more now

This isn’t a niche function any more. In 2026, 82% of companies surveyed by Maze have at least one dedicated UX researcher on staff (Maze). That tells you two things. First, product teams increasingly treat research as operationally necessary. Second, organisations that still rely on intuition alone are now the exception, competing against teams that routinely test their assumptions.

For anyone trying to understand the day-to-day craft, Uxia’s overview of user experience research is a useful starting point because it frames research as an ongoing product capability, not a one-off workshop.

Good UX research doesn't exist to prove a team right. It exists to reveal where the team is wrong early enough to do something about it.

What a researcher is not

A user experience researcher isn’t just a note-taker in interviews. They aren’t a usability-only specialist. They also aren’t there to produce decorative artefacts with no connection to delivery.

What works is research tied to decisions:

| Product question | Researcher contribution |
|---|---|
| Should we build this? | Clarifies whether the problem is real and for whom |
| Why are users failing here? | Identifies the behavioural and contextual causes |
| What should we improve first? | Prioritises issues by impact and recurrence |
| How do we persuade stakeholders? | Brings evidence into strategy discussions |

The profession sits at the intersection of design, product, business, and behaviour. That’s why the best user experience researchers are useful long before a usability test starts and long after one ends.

The Strategic Mission of a UX Researcher

The strategic job of a UX researcher is to de-risk product decisions.

That’s the simplest and most useful way to explain the role to a product manager. Not “they run interviews”. Not “they own insights”. They reduce the odds that a team ships the wrong thing, solves the wrong problem, or scales a flawed experience.

Research protects product bets

Teams usually fail in familiar ways. They over-trust internal opinions. They test too late. They confuse what users say with what users do. They collect feedback but don’t connect it to business choices.

A good researcher creates discipline around those weak points.

They ask questions like:

What assumption are we betting on?

What evidence would change our direction?

Are we diagnosing a usability issue, a value problem, or a trust problem?

Who is struggling most, and in what context?

Those questions matter because many product costs are hard to reverse. Once engineering starts building into the wrong flow, every revision gets slower and more political.

The business case is already there

There’s a reason mature teams invest in research. Every $1 invested in UX research yields a $100 return (9,900% ROI), according to the Forrester benchmark cited by Adobe (Adobe). You don’t need to take that as a promise for every project. You should take it as a strong signal that informed product decisions have material business value.
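The 9,900% figure is straightforward arithmetic. It assumes the $100 is the gross return on each $1 invested, so the net gain is $99:

```latex
\text{ROI} = \frac{\text{return} - \text{investment}}{\text{investment}} \times 100\%
           = \frac{\$100 - \$1}{\$1} \times 100\%
           = 9{,}900\%
```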

That value shows up in several places:

Fewer bad launches because teams validate assumptions earlier

Cleaner prioritisation because issues are ranked by user impact, not internal volume

Better retention and trust because friction is caught before it becomes habit

Less rework because teams understand the problem before polishing the solution

Practical rule: If research starts after design sign-off, the team is probably using it as QA, not strategy.

Where researchers exert influence

The strongest user experience researchers don’t wait for a brief that says “please run a study”. They shape how decisions get made.

That often means playing three roles at once.

Translator

Researchers translate between user behaviour and product language. A participant may describe anxiety, hesitation, or confusion. The team needs that reframed into design implications, risk areas, and next actions.

Challenger

Research is one of the few functions allowed to challenge momentum with evidence. That matters because velocity without correction isn’t progress. It’s just faster waste.

Prioritiser

A backlog is full of requests. A researcher helps the team see which issues are systemic, which are local, and which are symptoms rather than root causes.

Here’s what usually works better than “run more research”:

| Weak approach | Better approach |
|---|---|
| Test the whole product because stakeholders want confidence | Test the riskiest assumption in the flow |
| Ask users if they like it | Observe whether they can use it and why they hesitate |
| Present every finding equally | Separate critical blockers from minor polish issues |
| Share a report once | Bring insights into planning, design reviews, and roadmap trade-offs |

The mission is strategic because the work changes decisions, not just documents them. That’s why the best researchers are often valued less for collecting data and more for helping a team act on the right evidence at the right time.

Essential UX Research Methods and Techniques

Methods matter, but method choice matters more.

A lot of weak research comes from using a familiar tool for the wrong question. Teams run surveys when they need observation. They book interviews when they need task-based testing. They recruit a handful of participants and expect conclusions that hold for the whole market. The craft is in matching the method to the decision.

Two distinctions that keep your research honest

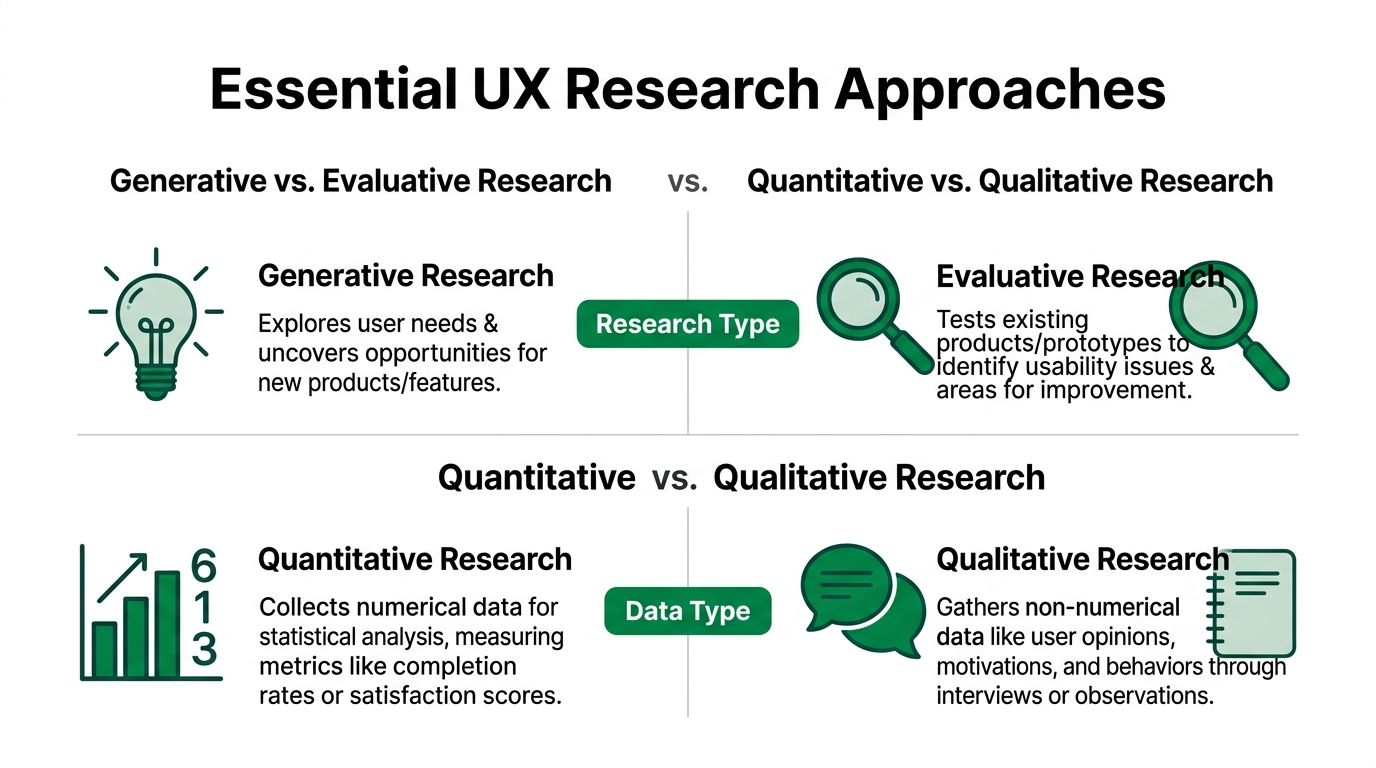

The first distinction is generative versus evaluative.

Generative research helps a team understand unmet needs, workarounds, and mental models before a solution is defined. Evaluative research tests whether an existing concept, prototype, or live flow works.

The second is quantitative versus qualitative.

According to Dovetail, UX researchers need a strategic mix of quantitative methods, which measure patterns, and qualitative methods, which explain motivations and behaviour. Modern platforms can support both by capturing think-aloud transcripts alongside metrics such as completion rates and error frequencies (Dovetail).

That combination matters because a dashboard can tell you what happened. It usually can’t tell you why.
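The minimal Python sketch below makes that pairing concrete. It assumes a hypothetical session record (participant, task, completed flag, error count, think-aloud quotes) rather than any platform's real export format. For each task it computes completion rate and errors per attempt, and it keeps quotes from failed attempts next to the numbers so the "why" stays attached to the "what".

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Session:
    """One participant attempting one task (hypothetical record shape)."""
    participant: str
    task: str
    completed: bool
    errors: int
    think_aloud: list[str] = field(default_factory=list)


def summarise(sessions: list[Session]) -> dict[str, dict]:
    """Per task: completion rate, mean errors per attempt, and quotes from failures."""
    by_task: dict[str, list[Session]] = defaultdict(list)
    for s in sessions:
        by_task[s.task].append(s)

    summary = {}
    for task, runs in by_task.items():
        attempts = len(runs)
        summary[task] = {
            "attempts": attempts,
            # Quantitative: what happened
            "completion_rate": sum(r.completed for r in runs) / attempts,
            "errors_per_attempt": sum(r.errors for r in runs) / attempts,
            # Qualitative: why it may have happened (failed attempts only)
            "quotes_from_failures": [
                q for r in runs if not r.completed for q in r.think_aloud
            ],
        }
    return summary


# Hypothetical example data
sessions = [
    Session("p1", "checkout", True, 0),
    Session("p2", "checkout", False, 3, ["I can't tell if the discount applied."]),
    Session("p3", "checkout", True, 1),
    Session("p4", "checkout", False, 2, ["Where do I enter the postcode?"]),
]
report = summarise(sessions)
print(report["checkout"])
```

A 50% completion rate alone would only say that checkout is a problem. The attached quotes point at two different causes worth investigating.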

What to use and when

Here’s a practical view of the main options.

| Method | Best for | What it gives you | Common failure mode |
|---|---|---|---|
| User interviews | Discovery, mental models, unmet needs | Rich context, language, motivations | Asking hypothetical questions and treating opinions as behaviour |
| Usability testing | Task performance and friction | Observable problems in flows and UI | Turning it into a preference interview |
| Surveys | Broad input and pattern checks | Reach across many users, directional signals | Asking vague questions that produce vague answers |
| Analytics review | Behavioural trends in live products | Drop-offs, paths, feature usage | Assuming causation from patterns alone |
| Diary studies | Longer-term habits and context | Behaviour over time | Poor setup that creates thin or inconsistent entries |

Traditional methods still matter

Interviews are still one of the most useful tools in the stack. They’re especially valuable when a team doesn’t understand user context well enough to define the right problem.

Usability testing is the workhorse for product teams because it reveals where users hesitate, misread, or lose trust during tasks. It’s often the fastest way to find friction in a prototype before engineering invests heavily.

Surveys can help when you need breadth, but they’re often overused. If the team needs explanation rather than reach, interviews or moderated sessions usually outperform them.

If you’re building that capability, this guide on how to analyze interview data is useful because the true value of interviews comes after the calls, when themes need to be coded, grouped, and translated into decisions.

Where AI-driven testing changes the workflow

Traditional research has obvious constraints. Recruiting takes time. Scheduling slows down momentum. Live sessions create operational overhead. And by the time findings are consolidated, the team has often moved on.

That’s where synthetic and automated workflows are starting to reshape the role.

Uxia is one example. Alongside its guide on how to conduct user interviews, it takes a broader AI testing approach in which teams upload designs, define a mission, and review synthetic participant behaviour, think-aloud output, and issue patterns without a conventional recruiting cycle.

This doesn’t replace every traditional method. It changes the trade-offs.

Where synthetic testing helps

Early validation: Teams can probe obvious friction before spending time on live recruitment.

Iteration speed: Designers can test multiple flows quickly instead of waiting for the next formal study.

Coverage: Product teams can review repeated patterns across screens and tasks in a more continuous rhythm.

Where human research still wins

Sensitive domains: Trust, emotion, stigma, and complex decision contexts still need human nuance.

Deep generative work: If you’re exploring unmet needs or organisational workflows, direct human conversation is often irreplaceable.

Stakeholder credibility in some environments: Some teams still need to hear real user language live before they’ll act.

Fast research is only useful if the team still asks a serious question. Speed doesn't rescue a vague brief.

The practical answer isn’t “old methods or AI”. It’s a layered approach. Use lightweight and automated methods to catch obvious friction early. Use live qualitative research when the problem is ambiguous, high-stakes, or emotionally complex.

That’s what competent researchers do. They don’t defend a method. They choose one.

Key Deliverables and Research Outputs

Research only becomes useful when someone else can act on it.

A user experience researcher doesn’t stop at collecting observations. The work has to be turned into outputs that help designers revise, product managers prioritise, and stakeholders align around the same reality.

The difference between notes and deliverables

Raw notes are not a deliverable. A transcript is not a deliverable. A wall of sticky notes isn’t a deliverable either, unless the team can use it to decide something concrete.

Good outputs do three things:

Reduce ambiguity

Point to action

Travel well across teams

That means the artefact has to match the audience. A product manager may need prioritised issues and decision implications. A designer may need interaction-level evidence. Leadership may need patterns and risk framing, not session detail.

The outputs teams use

Some deliverables keep proving their value because they help different functions work from the same understanding.

Usability findings report

This is usually the fastest route from observation to action. It summarises what users tried to do, where they failed, how severe the issue appears, and what evidence supports the recommendation.

What works is a short report with clips, quotes, screenshots, and ranked issues.

What doesn’t work is a dense deck with every observation treated as equally important.
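Ranking can be made explicit with a simple score. The sketch below uses a common heuristic: multiply a severity rating by the share of participants who hit the issue. It is an assumption, not a method prescribed here. The 1 to 4 severity scale, the `Issue` fields, and the example data are all hypothetical, and teams use different scales.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    title: str
    severity: int   # assumed scale: 1 = cosmetic ... 4 = blocks the task
    affected: int   # number of participants who hit the issue
    evidence: str   # reference to a clip, quote, or screenshot


def rank_issues(issues: list[Issue], participants: int) -> list[tuple[float, Issue]]:
    """Score = severity x share of participants affected; highest score first."""
    scored = [(i.severity * i.affected / participants, i) for i in issues]
    # Break ties on severity so blockers stay above polish issues
    scored.sort(key=lambda pair: (pair[0], pair[1].severity), reverse=True)
    return scored


# Hypothetical findings from an eight-person study
issues = [
    Issue("Promo code field looks optional", 2, 6, "clip 03"),
    Issue("Payment error gives no recovery path", 4, 3, "clip 07"),
    Issue("Icon labels truncated on mobile", 1, 5, "screenshot 12"),
]
ranked = rank_issues(issues, participants=8)
for score, issue in ranked:
    print(f"{score:.2f}  sev {issue.severity}  {issue.title}  ({issue.evidence})")
```

The first two issues score the same here. The severity tie-break puts the payment blocker first, which is the kind of judgement call a report should make visible rather than leave implicit.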

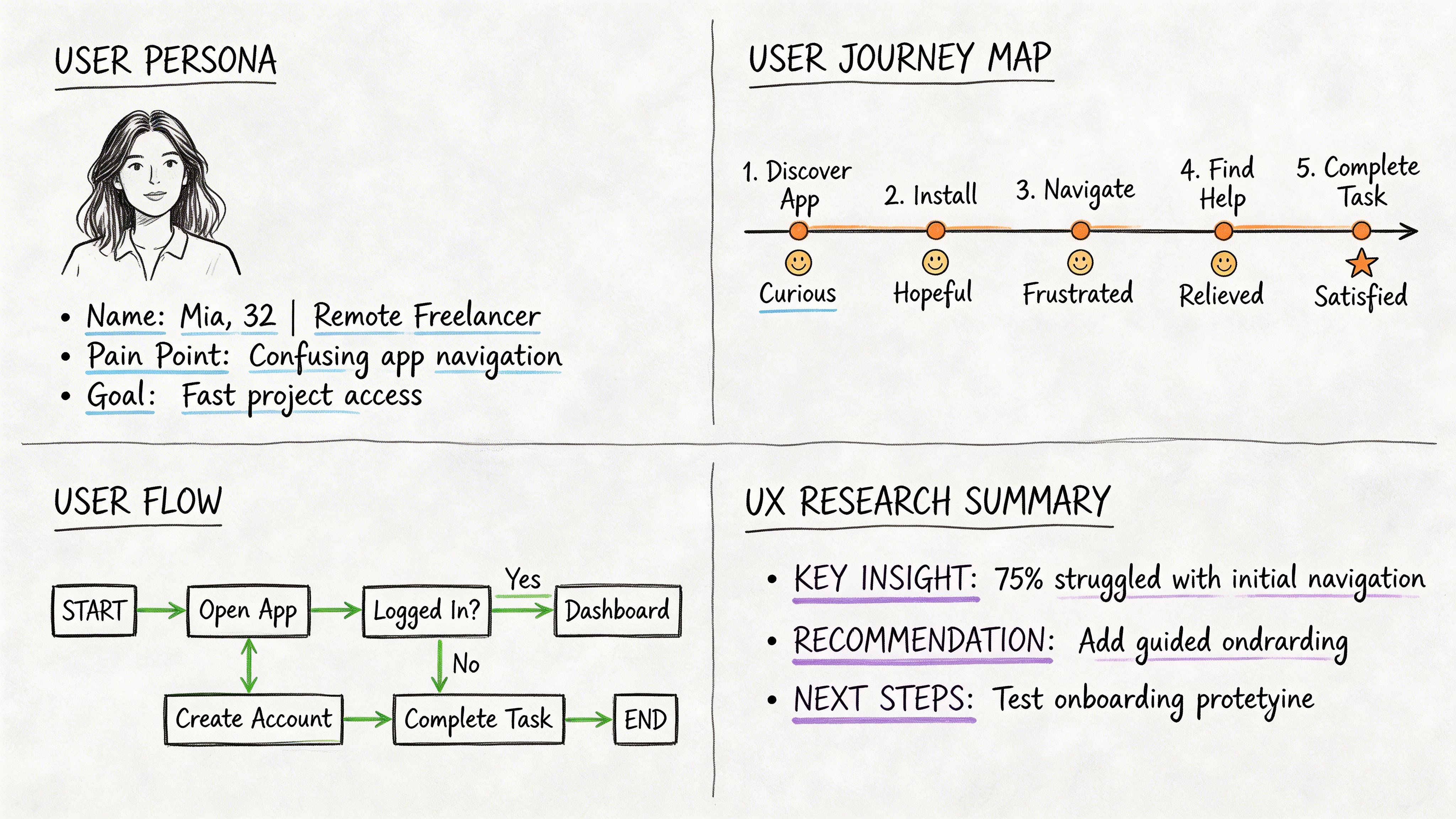

Persona or behavioural segment

A good persona isn’t fiction with a stock photo. It’s a focused summary of patterns that matter for product decisions, such as goals, constraints, trust triggers, and context of use.

If the persona doesn’t change design choices, it’s probably too generic.

Journey map

Journey maps are useful when pain points span channels, teams, or moments in time. They help people see that a broken experience often starts before the screen they own and continues after it.

This is especially valuable when product teams optimise isolated steps and miss the broader workflow.


A simple flow from data to action

Most strong deliverables follow a sequence like this:

Collect evidence through sessions, tasks, observations, or behavioural data.

Cluster patterns so repeated issues become visible.

Interpret impact by asking what this means for task success, trust, or comprehension.

Translate into guidance that design and product can act on.

Socialise the findings in meetings where priorities are set.
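The "cluster patterns" step is usually done by hand on a board, but the underlying logic is simple once observations carry a theme tag. This minimal sketch assumes each observation is already coded with one theme; the tuples and tags are hypothetical. It counts distinct participants per theme, so one vocal participant can't make an issue look widespread.

```python
from collections import defaultdict


def cluster_by_theme(
    observations: list[tuple[str, str, str]],
) -> list[tuple[str, int, list[str]]]:
    """Group (participant, theme, note) tuples by theme.

    Returns (theme, distinct participants, notes), most widespread theme first.
    """
    participants: dict[str, set[str]] = defaultdict(set)
    notes: dict[str, list[str]] = defaultdict(list)
    for participant, theme, note in observations:
        participants[theme].add(participant)
        notes[theme].append(note)
    clusters = [(theme, len(people), notes[theme]) for theme, people in participants.items()]
    # Rank by breadth (how many people), not by raw mention count
    clusters.sort(key=lambda c: c[1], reverse=True)
    return clusters


# Hypothetical coded observations
observations = [
    ("p1", "unclear pricing", "Asked whether VAT was included"),
    ("p1", "unclear pricing", "Scrolled back to check the total"),
    ("p1", "unclear pricing", "Said the plan names meant nothing"),
    ("p2", "trust", "Hesitated at the card form"),
    ("p3", "trust", "Looked for a security badge"),
    ("p3", "unclear pricing", "Compared plans twice"),
    ("p4", "trust", "Asked who stores payment data"),
]
clusters = cluster_by_theme(observations)
for theme, people, theme_notes in clusters:
    print(f"{theme}: {people} participants, {len(theme_notes)} notes")
```

Counting people rather than mentions is a deliberate choice. In the sample data, pricing comes up more often, but trust affects more participants.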

A research report should make the next decision easier, not prove how much work the researcher did.

How modern tools are changing outputs

The mechanics of documentation are shifting. Tools now help generate transcripts, summarise common issues, and package evidence more quickly than manual workflows used to allow.

That’s useful, but only if the researcher still does the hard part. Synthesis. Judgement. Prioritisation. Narrative.

A strong deliverable today might include:

| Output element | Why it matters |
|---|---|
| Session excerpts | Gives stakeholders direct evidence |
| Prioritised issue list | Helps teams decide what to fix first |
| Heatmaps or interaction patterns | Makes friction visible at a glance |
| Summary narrative | Connects isolated findings into one product story |
| Recommended actions | Prevents “interesting, but now what?” reactions |

The best research outputs aren’t impressive because they’re polished. They’re impressive because they change what the team does next.

Skills and Qualities of a Successful UX Researcher

Tools can accelerate collection and summarisation. They can’t replace judgement.

That’s why the strongest user experience researchers combine method competence with strong interpersonal and strategic skills. If either side is weak, the work suffers. A technically precise researcher who can’t influence stakeholders gets ignored. A persuasive storyteller without methodological rigour can mislead a team with confidence.

Hard skills that still matter

At the practical level, researchers need to know how to design and run sound studies.

That includes:

Research design: choosing the right method for the question

Interviewing and moderation: guiding sessions without leading participants

Usability testing: observing behaviour, not just collecting opinions

Analysis and synthesis: finding patterns without flattening nuance

Insight writing: turning evidence into decisions teams can act on

These aren’t glamorous skills, but they are foundational. A lot of poor research falls apart because the questions were weak, the tasks were biased, or the findings were over-claimed.

Soft skills are becoming more valuable

As collection gets faster, the skills around meaning and influence matter more.

Curiosity

Strong researchers don’t stop at the obvious answer. If a participant says, “I didn’t trust this page,” curiosity pushes into what triggered that response. Was it the copy, the visual hierarchy, the missing reassurance, or prior experience with similar products?

Empathy

Empathy is often misunderstood as being nice. In research, it’s closer to disciplined perspective-taking. It helps you understand the user’s context without projecting your own assumptions onto it.

Storytelling

A finding that isn’t understood won’t shape anything. Researchers need to explain what happened, why it matters, and what the team should do next. Clearly.

Stakeholder management

Many otherwise capable researchers struggle with this. You can run a strong study and still lose impact if the right people weren’t involved early, or if the findings arrive after decisions have already hardened.

The most influential researcher in the room isn't always the one with the deepest dataset. It's often the one who can connect evidence to a decision the team needs to make this week.

What good looks like in practice

A capable researcher tends to show a few consistent habits:

They frame questions tightly. They know what decision the study is meant to support.

They separate observation from interpretation. That keeps analysis cleaner.

They resist overclaiming. They know the limits of each method.

They adapt communication by audience. Designers, PMs, and executives rarely need the same level of detail.

How to build these skills deliberately

If you’re early in the field, don’t focus only on learning tools. Learn how to ask sharper questions and make tighter recommendations.

A useful way to practise is to review any product flow and write three things:

The assumption the team seems to be making.

The evidence you’d need to validate or challenge it.

The decision that evidence should influence.

That exercise strengthens both research design and product thinking. It also mirrors where the profession is heading. As automation handles more of the mechanical work, the researcher’s edge comes from interpretation, facilitation, and strategic clarity.

Building Your UX Research Career and Portfolio

Many individuals enter UX research from somewhere else. Psychology, design, content, academia, customer support, analytics, product, journalism. That’s normal.

What matters isn’t having one approved background. It’s showing that you can investigate product questions rigorously, synthesise what you learn, and turn it into useful guidance.

How the career usually develops

UX research careers tend to mature through a clear progression of competence. According to UX Design, researchers move from foundational execution at the junior level to program-level synthesis and strategic framing at the senior level, typically over three to five years (UX Design).

That progression is a helpful benchmark because it reminds people not to rush the wrong milestones.

| Stage | Typical focus | What proves growth |
|---|---|---|
| Junior | Execution, logistics, note-taking, basic reporting | Reliable study setup and accurate observation |
| Mid-level | Insight generation and method selection | Connecting findings to design and product choices |
| Senior | Program thinking, alignment, storytelling | Shaping roadmaps, frameworks, and shared understanding |

What to put in a portfolio

A good portfolio doesn’t need brand-name companies. It needs evidence of thought.

The most convincing case studies usually show:

The problem: what question needed answering

The method choice: why that approach fit the problem

The evidence: what you observed or gathered

The interpretation: what patterns mattered

The outcome: what changed because of the research

What hiring managers often want isn’t a beautiful deck. They want to see whether you can think like a researcher.

If you have no formal experience yet

You can still build strong portfolio material.

Audit an existing product

Pick a real app or website. Define a realistic research question. Run a small interview or usability project with people in the target audience. Then document your method, findings, and recommendations.

Rework a weak flow

Choose a journey with visible friction, such as checkout, onboarding, or password reset. Study the flow, collect observations, identify trust and comprehension issues, and show how you’d improve it.

Compare methods, not just findings

A useful portfolio piece can include a section on what you’d do differently with more time, budget, or access. That shows maturity. It tells employers you understand trade-offs.

Skills that make candidates stand out now

The market is shifting, so the portfolio should reflect that. If your work only shows classic moderated sessions and generic empathy maps, it can look dated.

Show that you understand modern workflows:

Mixed-method thinking

Fast validation cycles

Evidence prioritisation

AI-assisted synthesis and testing

Clear communication for PMs and designers

You don’t need to pretend machines do the whole job. They don’t. But you should show that you can work effectively in teams where automation speeds up collection and first-pass analysis.

Practical moves that help

Some advice sounds good but doesn’t produce much. “Network more” is one of those phrases. Better moves are concrete.

Redo one real product problem end to end: This teaches you more than collecting certificates.

Write concise readouts: Hiring managers notice candidates who can explain findings without hiding behind jargon.

Practise moderation: Even informal sessions with volunteers improve your listening fast.

Build a repeatable template: A simple study plan, discussion guide, and findings format makes your work easier to review.

Learn to present trade-offs: Teams rarely need perfect certainty. They need a sensible recommendation with clear limits.

Your portfolio should show how you think under constraints, not just what methods you know by name.

A junior researcher gets hired for execution potential. A mid-level researcher gets trusted for judgement. A senior researcher gets pulled into strategic conversations because they can align people around what matters.

If you build your portfolio with that arc in mind, it will read like a career in motion, not a pile of isolated exercises.

The Future of Research with AI and Automation

A product team ships a new onboarding flow on Tuesday. By Wednesday, the PM wants to know where users are hesitating, whether the copy is clear, and if the final step is causing drop-off. A traditional study can answer that, but not at the speed many teams now expect. That timing gap is exactly why AI is changing UX research practice.

The role is not getting smaller. It is getting more selective about where human judgement matters.

AI now handles more of the setup work that used to consume research time. Teams can generate synthetic test sessions, review probable friction points in a prototype, cluster repeated reactions, and produce a first-pass summary before a researcher has even written the readout. Tools such as Uxia are pushing that shift further by making AI-supported user research workflows part of regular product iteration rather than a special project.

That changes the job in a practical way. Researchers spend less time recruiting for every simple check, tagging the same patterns by hand, or waiting two weeks to confirm that a form field is confusing. They spend more time deciding whether the signal is trustworthy, where synthetic testing is enough, and where direct user contact is still required.

That distinction matters.

AI is useful for speed, coverage, and early pattern detection. It is weaker at explaining sensitive motivations, social context, edge-case behaviour, and anything shaped by trust, power, or emotion. A synthetic participant can flag a likely usability issue. It cannot fully represent the anxiety of a patient reading medical results or the caution of a finance user making a high-risk transfer. Senior researchers will keep their value by knowing which question belongs to which method.

The strongest teams already combine both lanes of evidence:

Use AI testing for rapid checks on flows, copy, hierarchy, and prototype friction

Use human research for behaviour that depends on context, stakes, memory, or emotion

Compare synthetic findings against live user evidence before making high-confidence product decisions

Treat automated summaries as drafts that still need review, prioritisation, and interpretation

Hiring is shifting with the work. Managers are looking for researchers who can run studies and also judge automated outputs. If you are presenting that skill set in applications, the article “Should I Use AI to Write My Resume?” is a practical reference on using AI as support without letting it flatten your actual experience.

A future-ready UX researcher does three things well. They frame the right question, choose the right level of evidence, and help the team act on imperfect information. That is harder than manual execution alone, and more valuable.

AI reduces time spent on repetitive research tasks. It increases the premium on judgement, synthesis, and product sense.

The next version of this profession looks more strategic, not less. Researchers who know how to work with AI tools like Uxia will cover more ground, test earlier, and spend more of their time where product teams need them most: making sense of ambiguity, challenging false confidence, and turning fast signals into sound decisions.