Synthetic Testers: AI-Driven UX Feedback to Accelerate Design

Discover how synthetic testers transform UX research with AI personas, delivering fast, scalable insights to build better products.

What if you could test a new feature with thousands of different users, all in the time it takes to grab a coffee? What if you could do it before your developers even wrote a single line of code? This isn't science fiction anymore. It's the new reality that synthetic testers are bringing to UX research. These AI-powered personas simulate real human behaviour, giving you immediate, actionable feedback and smashing the bottlenecks that have held product teams back for years.

The New Reality of UX Research

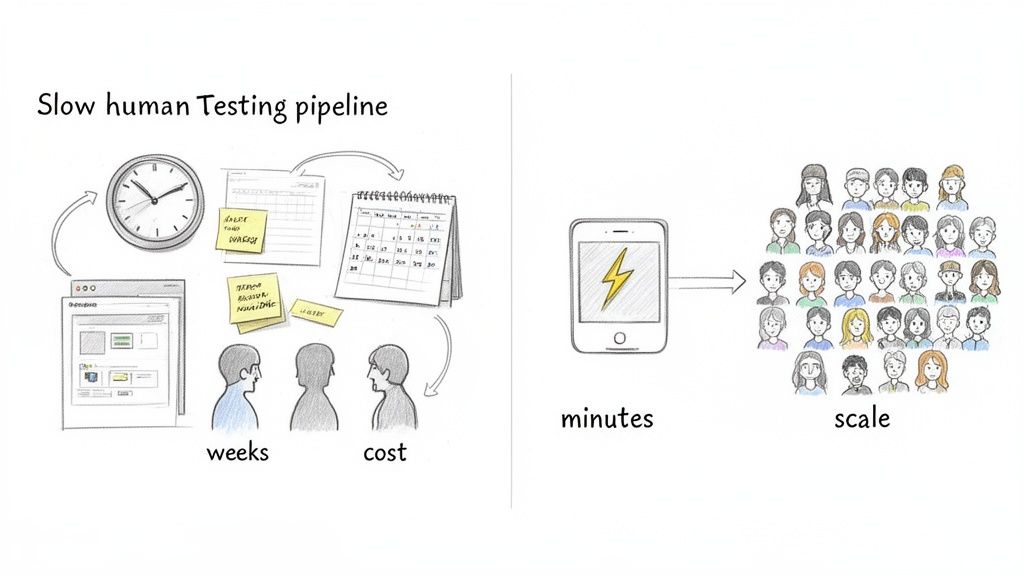

For too long, designers and product managers have been stuck in a frustrating cycle. Getting user feedback has always been slow and expensive. Traditional human testing means a long slog of recruiting participants, scheduling sessions, handling payments, and moderating the tests themselves. This whole process can drag on for weeks, stalling development and putting a brake on innovation.

This friction isn't just an annoyance. It’s a genuine obstacle. It means critical feedback often lands on your desk far too late in the game. You're then forced into costly post-launch fixes or, worse, you ship a product with glaring usability flaws you never even knew existed.

A Strategic Shift in Feedback

Synthetic testers represent a complete break from these old limitations. Instead of waiting around for a handful of human testers to become available, you can now unleash an army of AI agents to analyse your designs in an instant.

And these aren't just simple bots running through a pre-written script. They are sophisticated AI personas, meticulously designed to mimic the cognitive processes and behaviours of your specific user segments.

Think of them as digital method actors. They can "see" your UI, "think" about the goals you've given them, and "articulate" their entire experience as they navigate your product, just like a real person would in a think-aloud study.

Platforms like Uxia are making this powerful technology accessible to teams of all sizes. By bringing synthetic testers into your workflow early and often, you can gain a massive strategic advantage.

Accelerate Timelines: Squeeze testing cycles that used to take weeks into a matter of minutes. This enables true continuous iteration.

Scale Your Research: Test with thousands of unique personas to uncover widespread usability patterns and critical edge cases that small human panels would almost certainly miss.

Reduce Costs: Completely eliminate the overheads tied to participant recruitment, scheduling, and paying incentives.

The real power of synthetic testers is their ability to provide a continuous flow of feedback. You can test a new idea in the morning, push the changes in the afternoon, and re-test the whole thing before you clock off for the day.

This transforms UX research from a slow, periodic event into a fast, integrated part of your daily design and development rhythm.

Practical Recommendation: A great way to get started is to run a synthetic test on a design you've already tested with humans. This lets you directly compare the AI-generated insights from a platform like Uxia with your existing findings. It’s a fantastic way to build confidence and see the value for yourself.
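If you want to make that comparison a little more systematic, here is a minimal sketch in Python. It assumes you have manually tagged the issues from each study with short labels; the function name `compare_findings` and the example labels are hypothetical, not part of any platform's export format.

```python
def compare_findings(human: set[str], synthetic: set[str]) -> dict[str, object]:
    """Compare two sets of usability-issue labels (hypothetical helper)."""
    shared = human & synthetic
    return {
        "shared": sorted(shared),
        "human_only": sorted(human - synthetic),
        "synthetic_only": sorted(synthetic - human),
        # Share of human-found issues that the synthetic run also surfaced.
        "recall": len(shared) / len(human) if human else 0.0,
    }


# Made-up labels, for illustration only.
human_issues = {"hidden pricing", "unclear CTA label", "long signup form"}
synthetic_issues = {"hidden pricing", "long signup form", "low-contrast footer"}

report = compare_findings(human_issues, synthetic_issues)
print(report)
```

The `human_only` list is the interesting one: it shows what the synthetic run missed, which tells you where human sessions still earn their keep.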

Ultimately, synthetic testers aren't just another tool to add to your stack. They represent a new way of working—one that empowers product teams to build far better products with an efficiency that was previously unimaginable. They handle the repetitive, time-sucking parts of usability analysis, freeing up your human experts to focus on the strategic, high-impact work that truly matters.

What Exactly Are Synthetic Testers?

So, what exactly are synthetic testers? Let's cut through the jargon.

The easiest way to picture a synthetic tester is to think of it as a highly trained ‘digital actor’. You hand this actor a detailed character sheet and a specific mission to carry out on your website or app.

Imagine you need to test a new online banking sign-up flow. Instead of spending days recruiting a real person, you can deploy a synthetic tester in minutes using a platform like Uxia. You might define its persona as ‘Javier, a 45-year-old small business owner from Madrid who is tech-savvy but cautious about digital security.’ Its mission? ‘Open a new business account and fund it with an initial deposit.’

This is where you see their real power. These aren’t just simple bots that blindly click through a pre-defined script. They are advanced AI agents that can actually perceive, think, and interact with a user interface just like a human would. They analyse the layout, read the text on the screen, and make decisions to navigate the flow based on their assigned persona and goal.

From Persona to Actionable Feedback

As the synthetic tester works through its mission, it generates a continuous stream of qualitative feedback. It essentially 'thinks aloud', verbalising its thought process in real time. You’ll see it articulate points of confusion, moments of delight, and areas where friction brings its progress to a halt.

At its core, a synthetic tester works like an advanced AI survey answer generator, except that it simulates not just clicks but the cognitive and verbal reasoning behind them. It’s this ability to explain the 'why' behind its actions that delivers such rich usability insights.

This goes far beyond simple click-tracking. Using a platform like Uxia, the entire interaction is captured and processed instantly. What you get isn't just a pass-or-fail report; it's a rich dataset that includes:

Full session transcripts detailing the AI's "think-aloud" process.

Interaction heatmaps showing exactly where the synthetic user focused its attention.

Summarised usability reports that flag critical issues and patterns automatically.

This gives your team an incredibly powerful first line of defence against poor user experiences. You can catch and fix major usability problems long before a real customer ever gets frustrated, saving a huge amount of time and money.

Synthetic Testers vs. Traditional Human Testers

To put it all into perspective, it helps to see a direct comparison. While both aim to improve usability, their methods, speed, and costs are worlds apart.

Here’s a quick breakdown:

| Aspect | Synthetic Testers (e.g., Uxia) | Traditional Human Testers |
|---|---|---|
| Speed | Results in minutes | Takes hours to days |
| Cost | Low, fixed subscription | High, per-tester fees |
| Scale | Unlimited tests, easily scalable | Difficult and expensive to scale |
| Data Privacy | Fully anonymous, GDPR-compliant | PII management, GDPR risks |

As you can see, synthetic testing isn't just a minor improvement—it fundamentally changes the economics and logistics of user research.

The Growing Importance in Europe

The shift toward synthetic technologies is picking up speed across Europe. Germany is leading the way with its Industrie 4.0 initiatives, where major companies are investing heavily in AI automation and digital twins to perfect their user interfaces.

This trend is also being shaped by data privacy rules like GDPR, which make generating realistic, anonymised data more critical than ever. Before the AI Act, only 30% of EU startups could afford to run continuous testing. Now, platforms like Uxia are making this practice accessible to businesses of all sizes, allowing them to compete on a global stage. The European synthetic data market is set for massive growth, and you can explore more about these trends in a deeper analysis of the synthetic data market.

How Synthetic Testers See and Think

To really get what makes synthetic testers tick, it helps to pop the bonnet and see how they actually work. It’s not just one single piece of AI; it’s a fascinating pipeline of different technologies working in concert to mimic human thinking and behaviour. The whole setup is designed to turn your static designs into dynamic, qualitative feedback, fast.

It all starts with your design. You can feed the system a Figma prototype, a simple screenshot of your UI, or even a live URL. This becomes the world the synthetic tester will explore.

Once the design is in, the AI pipeline gets to work.

The Power of Computer Vision

First up, a sophisticated computer vision model acts as the AI’s ‘eyes’. It scans the user interface you’ve provided, carefully analysing its structure and content. It doesn’t just see a flat picture; it identifies and labels every single interactive element on the screen.

This means it can tell the difference between:

Buttons and links a user can click on.

Text fields and forms where someone might type.

Images and icons that give context or guide navigation.

Headings and body text that deliver information.

This first pass creates a structured map of the interface, which is crucial for what comes next. The AI now knows what’s on the screen and what it can interact with, much like how a person quickly scans a new webpage to get their bearings.
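To make the idea of a "structured map" more concrete, here is a rough sketch of what such a map could look like as data. The `UIElement` class, its fields, and the element kinds are assumptions made for illustration; the actual representation used by any given vision model or platform may look quite different.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UIElement:
    """One detected element on the screen (hypothetical structure)."""
    kind: str                        # e.g. "button", "link", "text_field", "heading"
    label: str                       # visible text or an inferred description
    box: tuple[int, int, int, int]   # x, y, width, height in pixels


# Assumed set of element kinds a user can act on.
INTERACTIVE_KINDS = {"button", "link", "text_field"}


def interactive_elements(ui_map: list[UIElement]) -> list[UIElement]:
    """Keep only the elements the tester could click on or type into."""
    return [el for el in ui_map if el.kind in INTERACTIVE_KINDS]


# A made-up map for a sign-up screen.
ui_map = [
    UIElement("heading", "Open a business account", (40, 20, 600, 48)),
    UIElement("text_field", "Company name", (40, 100, 320, 40)),
    UIElement("button", "Continue", (40, 160, 120, 40)),
    UIElement("link", "Help", (700, 20, 40, 20)),
]

for el in interactive_elements(ui_map):
    print(el.kind, "->", el.label)
```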

Crafting a Cognitive Model with LLMs

With the UI fully mapped out, the next piece of the puzzle comes in: a large language model (LLM). This is where the ‘digital actor’—the persona we talked about—is brought to life. The LLM is given a specific set of demographic and behavioural traits, creating a unique cognitive model for that synthetic tester.

For instance, you might set up a persona like: ‘A 25-year-old student who is budget-conscious and easily distracted by promotional offers.’ This simple instruction shapes the AI's "mindset," dictating its goals, priorities, and even its biases.

This goes way beyond simple scripting. The persona isn't just a label; it's an active cognitive framework that dictates how the AI will interpret the UI and make decisions. This is what allows synthetic testers to simulate the nuanced, and sometimes unpredictable, nature of real users.

A platform like Uxia is brilliant at managing this. Inside Uxia, you can easily build these detailed personas by combining different attributes. This lets you create hundreds of distinct cognitive models to test your designs against, making sure the feedback you get actually reflects the diverse spectrum of your target audience.
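Here is a quick sketch of how combining attributes multiplies into many distinct personas. The attribute lists are invented for illustration, and this is plain Python rather than anything specific to Uxia's persona builder.

```python
from itertools import product

# Illustrative attribute pools; real ones would come from your own user research.
ages = ["25-year-old", "45-year-old", "68-year-old"]
roles = ["student", "small business owner", "retiree"]
traits = [
    "budget-conscious",
    "cautious about digital security",
    "easily distracted by promotional offers",
]

# Every combination of age, role, and trait becomes one persona description.
personas = [
    f"A {age} {role} who is {trait}."
    for age, role, trait in product(ages, roles, traits)
]

print(len(personas))
print(personas[0])
```

Three options for each of three attributes already gives 27 personas. In practice you would probably filter out combinations that don't match anyone in your audience.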

Navigating and Thinking Aloud

Now for the magic. The AI persona ‘looks’ at the vision model’s map of the UI and decides what to do next based on its assigned goal. If its mission is to ‘find the return policy’, the AI will start looking for elements that seem relevant, like ‘Help’, ‘Support’, or ‘FAQ’ links.

As it navigates, it generates a real-time ‘think-aloud’ commentary, explaining its choices, assumptions, and frustrations.

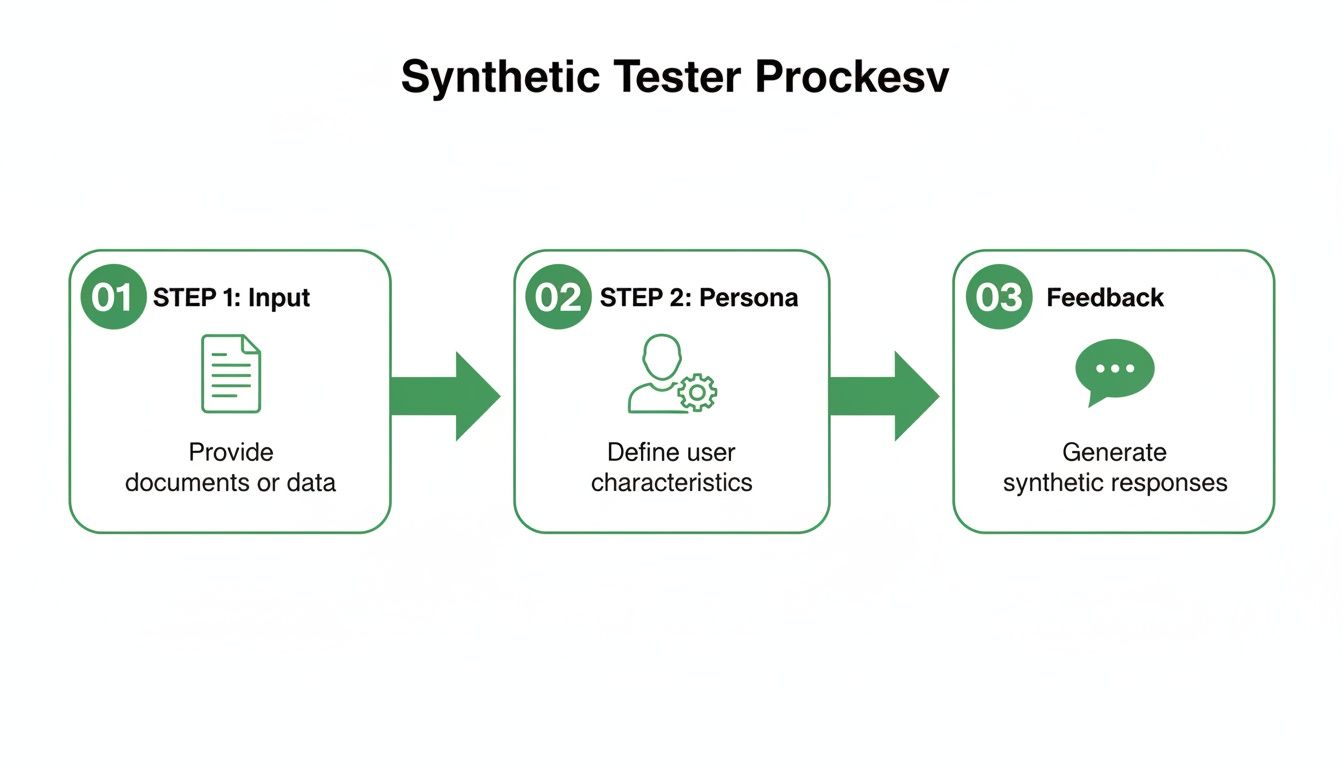

This simple but powerful three-step process is broken down in the flowchart below.

It shows how a design input is combined with a specific AI persona to produce actionable user feedback.

You might hear it say something like, “I’m looking for shipping information, but all I see are marketing banners. This is frustrating.” This constant stream of qualitative feedback gives you incredible insight into the user’s thought process. Under the hood, synthetic testers process information and interact with a UI through agentic workflows for automated tasks. This gives them the autonomy to pursue goals with a flexibility that mirrors human problem-solving.

Platforms like Uxia handle this entire pipeline for you, from creating the personas to interpreting the think-aloud narratives. The system automatically analyses the transcripts, flags recurring friction points, and summarises the key findings into a report you can actually use. This makes what was once complex AI technology accessible to any UX professional, no matter their technical background.

The Practical Benefits for Modern Product Teams

Understanding the theory behind synthetic testers is one thing. But seeing how they deliver real-world business value is where it all clicks. For modern product teams, the benefits aren't just small improvements—they completely change how fast and how well you can build products.

The most obvious advantage is pure speed. Traditional user testing is notoriously slow. Recruitment and scheduling can easily eat up weeks. Synthetic testing shrinks that entire process down to a matter of minutes. This unlocks genuine continuous iteration, turning feedback into a daily workflow instead of a slow, periodic checkpoint.

Achieve Unprecedented Scale and Consistency

Beyond speed, synthetic testers give you incredible scale. Instead of gathering feedback from a small panel of five or ten people, you can run tests with thousands of unique AI personas at the same time. This is how you uncover broad usability patterns and those critical edge cases that small human groups would almost certainly miss.

Better yet, platforms like Uxia aim to deliver feedback that is far more consistent than a human panel can manage. Human testers can be influenced by previous tests, their mood, or even what they had for lunch. AI agents, on the other hand, apply the same criteria on every run. This avoids the "professional tester" problem, where participants get too familiar with testing processes, and keeps your data comparable from one test to the next, 24/7.

Practical Recommendation: Imagine this: a startup needs to pick the best onboarding flow out of five different designs. Instead of spending weeks coordinating human tests, they run all five versions overnight with Uxia. They walk into their morning stand-up with a clear, data-backed winner, complete with transcripts and usability reports for each version.
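Picking the winner from a batch like that can be as simple as ranking by task completion rate. The sketch below uses made-up pass/fail outcomes per synthetic session; the variant names and numbers are purely illustrative.

```python
# Made-up outcomes: True means the synthetic tester completed onboarding.
results = {
    "flow_a": [True, True, False, True],
    "flow_b": [True, False, False, True],
    "flow_c": [True, True, True, True],
    "flow_d": [False, False, True, False],
    "flow_e": [True, True, False, False],
}


def completion_rate(outcomes: list[bool]) -> float:
    """Fraction of sessions that completed the task."""
    return sum(outcomes) / len(outcomes)


# Highest completion rate first.
ranked = sorted(results, key=lambda name: completion_rate(results[name]), reverse=True)

for name in ranked:
    print(f"{name}: {completion_rate(results[name]):.0%}")
```

Completion rate is only one signal. You would still want to read the transcripts for the top variants before committing to one.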

Drastic Cost Reduction and Resource Optimisation

The third major win is significant cost savings. When you remove the need for human participants, you also eliminate a bunch of expensive and time-consuming tasks. There are no more recruitment fees, scheduling headaches, or user incentives to juggle. This massively lowers the financial barrier to user research, making comprehensive testing accessible for teams of any size or budget.

This cost-effectiveness is driving adoption across all sorts of industries. The European synthetic monitoring market is growing fast as businesses realise these advantages. For instance, EU firms are reporting 70% faster feedback loops using synthetic methods compared to traditional recruiting, which often took four to six weeks. This shift empowers enterprise teams to validate everything from trust signals to microcopy iteratively, slashing the need for post-launch fixes by as much as 50%. You can dig into more data on this trend in this market analysis by Fortune Business Insights.

By automating the repetitive parts of usability analysis, synthetic testers free up your human researchers to focus on higher-value work. They can dedicate their time to strategic initiatives, deep ethnographic studies, and complex problem-solving—the tasks that truly require human empathy and insight. This lets you achieve a whole lot more with the same team. If you're curious how AI testers can help you meet industry research standards, you might like our guide on using NN/g research to achieve 98% usability issue detection.

How to Implement Synthetic Testers in Your Workflow

Bringing synthetic testers into your design process is a lot more straightforward than you might imagine. It’s all about adding a new, incredibly fast feedback loop that slots right into the work you’re already doing.

With a platform like Uxia, you can get from a static design to actionable insights in just a few minutes. A task that used to take days or weeks can now be finished before your next coffee break.

This simple, four-step playbook is designed to be completely intuitive. Anyone on your product team can run a sophisticated usability test without needing a degree in data science. It’s about turning good ideas into decisions backed by solid data.

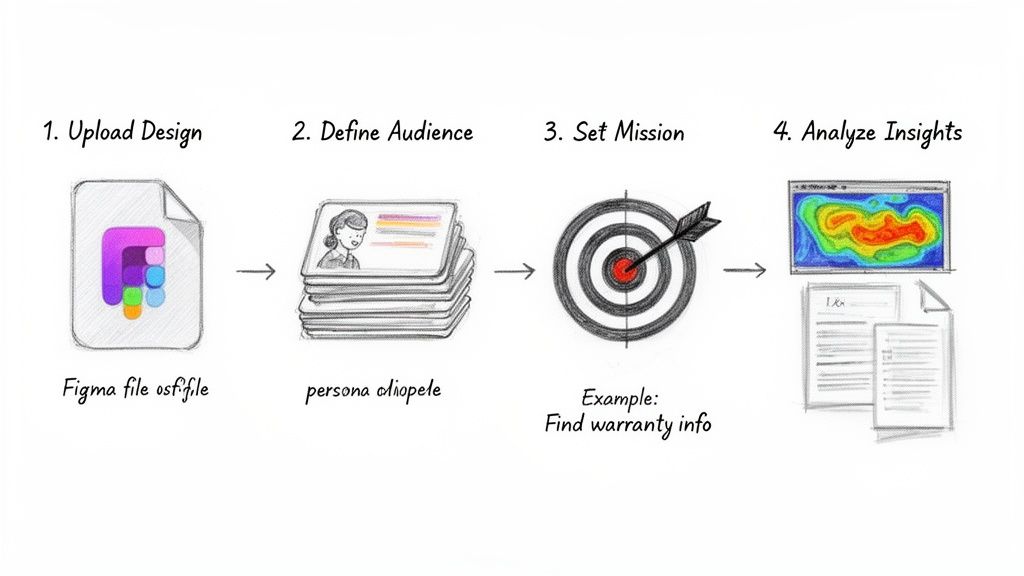

Step 1: Upload Your Design

The whole journey starts with your design. The first step is as simple as getting your work into the testing environment. You don't need a fully coded, high-fidelity product; in fact, testing early-stage assets is often where you'll find the most value.

Platforms like Uxia are built for flexibility, letting you test a variety of formats:

A Figma prototype: This is perfect for testing interactive flows and user journeys with multiple steps.

A simple screenshot: Got a new homepage layout or pricing page? A single image is all you need for rapid feedback.

A live URL: You can point the synthetic testers to a staging environment or even a live page to analyse its current performance.

Practical Recommendation: Start by testing low-fidelity wireframes or even simple screenshots. This allows you to catch major usability issues with minimal design effort, de-risking concepts before you invest heavily in high-fidelity mockups.

Step 2: Define Your Audience

Once your design is in, you need to tell the AI who it should be. This is where you build the personas for your synthetic testers. A common mistake here is stopping at basic demographics like age and location. The real magic happens when you layer behavioural traits on top.

Using a tool like Uxia, you can build nuanced personas that reflect how real users actually think. So, instead of just testing with a '35-year-old from Barcelona', you can define them as a '35-year-old from Barcelona who is sceptical about online payments and always looks for social proof before buying'.

This behavioural layer is what makes the feedback so powerful. It turns a generic profile into a cognitive model that guides the AI's every move and thought process, giving you insights that are far more realistic and useful.
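One way to picture the difference between a demographic-only profile and a layered one is to look at the instruction each would produce for a language model. The `Persona` class and its `to_prompt` method below are a hypothetical sketch, not how any particular platform stores personas.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """Demographics plus an optional behavioural layer (hypothetical structure)."""
    age: int
    location: str
    behaviours: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the persona as a one-sentence instruction for a language model."""
        base = f"You are a {self.age}-year-old from {self.location}"
        if not self.behaviours:
            return base + "."
        return f"{base} who {' and '.join(self.behaviours)}."


generic = Persona(35, "Barcelona")
layered = Persona(
    35,
    "Barcelona",
    ["is sceptical about online payments", "always looks for social proof before buying"],
)

print(generic.to_prompt())
print(layered.to_prompt())
```

The first prompt gives the model almost nothing to reason from. The second gives it motivations it can act on when it hits a checkout page.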

Step 3: Set a Clear Mission

You've got your design and your audience. Now, give them a job to do. This is the 'mission'—the task you want your synthetic testers to accomplish. Writing a good mission is a bit of an art; you need to be specific enough to guide them but open enough to allow for natural exploration.

Practical Recommendation: A strong mission sets a clear goal without being too prescriptive.

Good: "Find the warranty information for a laptop and see how long it lasts."

Less Effective: "Click the support button, then click FAQs, then scroll to the warranty section."

The first prompt gives the AI a clear objective but lets it figure out how to get there. This is how you uncover unexpected navigation problems. The second example is just a rigid script that won't reveal any real user friction.
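If your team writes a lot of missions, a rough heuristic check can catch overly scripted ones before you run them. The word list below is an assumption and will produce false positives, so treat it as a nudge rather than a rule.

```python
import re

# Words that usually signal step-by-step instructions rather than a goal (assumed list).
PRESCRIPTIVE = re.compile(r"\b(click|tap|scroll|press|then)\b", re.IGNORECASE)


def prescriptive_terms(mission: str) -> list[str]:
    """Return the distinct scripted-sounding words found in a mission."""
    return sorted({match.lower() for match in PRESCRIPTIVE.findall(mission)})


good = "Find the warranty information for a laptop and see how long it lasts."
scripted = "Click the support button, then click FAQs, then scroll to the warranty section."

print(prescriptive_terms(good))
print(prescriptive_terms(scripted))
```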

Step 4: Analyse the Insights

This is where all that speed pays off. As soon as the synthetic testers complete their mission, a platform like Uxia organises the findings into a clean, digestible report. No more wading through hours of video recordings. You get a prioritised summary of what went wrong, instantly.

The analysis usually includes:

Flagged Usability Issues: The system automatically flags every point of friction, confusion, or outright failure.

Annotated Screenshots: You get visual proof showing exactly where on the screen an issue happened.

AI-Summarised Reports: High-level takeaways and patterns are identified for you, saving you hours of manual analysis.

The goal here is to turn this raw data into a clear action plan. By seeing the biggest friction points highlighted automatically, your team can quickly create a prioritised list of design fixes to tackle in the very next sprint.
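To show how flagged issues might turn into a prioritised list, here is a small sketch that scores each issue by how often it appeared multiplied by its worst severity. The scoring rule, the severity weights, and the session data are all assumptions for illustration; a real report may weigh things differently.

```python
from collections import Counter

# Assumed severity weights.
SEVERITY = {"blocker": 3, "major": 2, "minor": 1}

# Made-up flagged issues per session: (issue label, severity level).
sessions = [
    [("shipping info hidden", "major"), ("coupon field rejected code", "blocker")],
    [("shipping info hidden", "major")],
    [("shipping info hidden", "major"), ("small footer text", "minor")],
]


def prioritise(all_sessions: list[list[tuple[str, str]]]) -> list[tuple[str, int]]:
    """Score each issue as occurrences x worst severity, highest score first."""
    counts = Counter()
    worst = {}
    for issues in all_sessions:
        for name, level in issues:
            counts[name] += 1
            worst[name] = max(worst.get(name, 0), SEVERITY[level])
    scored = [(name, counts[name] * worst[name]) for name in counts]
    return sorted(scored, key=lambda item: item[1], reverse=True)


backlog = prioritise(sessions)
for name, score in backlog:
    print(score, name)
```

Here a widespread "major" issue outranks a one-off "blocker". Whether that is the right call depends on your product, which is why the weights are worth tuning with your team.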

If you're exploring different AI-driven testing options, you might find our roundup of the best tools for AI testing useful for more context.

Understanding the Limitations and Ethical Questions

While the speed and scale of synthetic testers are impressive, it's crucial to have a clear-eyed view of the technology. You need to understand what they are and, just as importantly, what they aren't. They are not a one-for-one replacement for human researchers.

Instead, their real power comes from augmenting your existing research process. Synthetic testers are brilliant at specific, high-volume tasks. Think early-stage validation, rapid functional testing, and mapping out broad usability patterns across countless user journeys.

But they can't replicate genuine human emotion or uncover the surprising 'aha!' moments that pop up in deep, empathetic conversations. A synthetic tester won't ever share a personal story that completely reframes how you see a problem. That deeply human connection remains the exclusive domain of skilled researchers.

Addressing Data Validity and Trust

A fair and common question teams ask is: how can we trust the AI’s feedback? We tackle this concern at Uxia through rigorous validation studies where we benchmark the outputs from AI agents against the results from traditional human testing. The goal isn't for the AI to perfectly mimic one specific person, but to accurately model the common behaviours and friction points we see across a target user group.

This focus on privacy and validity has driven significant adoption, particularly in Europe. Strict regulations like GDPR have actually accelerated the growth of the European synthetic data generation market, which is projected to claim a substantial revenue share by 2034. As companies adopt these privacy-compliant tools, research shows that bias from human testers can drop by as much as 40%, leading to more confident and reliable design iterations. You can explore the full report on the growth of the synthetic data generation market for all the details.

The relationship between synthetic and human testers isn't a competition. You can learn more about how they work together by comparing synthetic users vs human users in our detailed guide.

Practical Recommendation: The key takeaway is that synthetic testers empower your research team; they don’t replace it. They act as a force multiplier, handling the repetitive, time-consuming tasks that bog down workflows.

This strategic partnership frees up your human experts to focus on the complex, empathy-driven work that AI simply can't do. A platform like Uxia manages the high-volume analysis, letting your team dedicate their valuable time to strategic planning, deep user interviews, and creative problem-solving. It’s about letting a small team achieve a massive impact.

Common Questions About Synthetic Testers

Whenever a new technology comes along, it’s natural to have questions. Getting your head around synthetic testers is the first step to figuring out how they can actually help your team and where they fit into your existing workflow.

Let’s run through some of the most common questions we hear from UX teams dipping their toes into this for the first time.

Are Synthetic Testers Just Glorified Bots?

Not at all—they’re a completely different beast. A bot is just a script. It follows a rigid, pre-programmed set of instructions, blindly clicking from point A to point B without any real awareness of what it's doing.

A synthetic tester on a platform like Uxia, on the other hand, operates using a cognitive model that simulates human thought. It uses computer vision to ‘see’ the interface and an LLM-powered persona to ‘think’ and decide what to do next. This lets it navigate, interpret content, and react to what it finds, including unexpected dead ends or confusing layouts. They don’t just follow a path; they simulate the reasoning that creates one.

How Can I Trust the Feedback from an AI?

Trust comes from validation, and that's exactly what these models are built on. The AI that powers synthetic testers is trained on enormous datasets of real human-computer interactions. More importantly, platforms like Uxia are constantly benchmarking their synthetic results against actual human test outcomes. This ensures the insights you get are realistic and genuinely useful.

Practical Recommendation: The goal isn’t to perfectly mimic every single whim of one specific person. It's about accurately modelling the common behaviours, thought processes, and friction points of an entire target audience at a massive scale. This gives you reliable patterns you can actually act on.

Will Synthetic Testers Replace My UX Research Team?

Absolutely not. The whole point of synthetic testers is to empower your research team, not make it obsolete. Think of it as a tool for automation. It takes on the most repetitive, time-sucking parts of usability testing—like early-stage design screening and constant iterative checks.

This frees up your human researchers to focus on the high-value, strategic work that only a person can do. We're talking about deep empathy, complex problem-solving, and creative insights. It’s all about letting a small team deliver a massive impact. With a platform like Uxia, your experts are amplified, not replaced.

How Do I Get Started with Synthetic Testers?

The best way is to start small and weave them into your process step-by-step. A great first move on a platform like Uxia is to run a test on a design you’ve already validated with human users.

Comparing the AI-generated results with your human-based findings is a fantastic way to build confidence and see exactly where the two methods align. Another easy win is running quick tests on new wireframes during a design sprint. You get valuable feedback in minutes, not weeks, which helps you de-risk concepts early and make smarter decisions much faster.
