Hybrid UX Research: The Ultimate Guide to Blending AI Speed with Human Insight

Discover how hybrid UX research blends AI speed with human empathy to accelerate product development and de-risk launches.

Hybrid UX research is a strategic approach that blends the lightning-fast scale of AI-driven methods with the deep, empathetic insights you can only get from human-led studies.

This powerful combination allows product teams to validate ideas in minutes, slash development risks, and still capture the essential ‘why’ behind user behaviour. The result is a research cycle that’s both more efficient and far more complete.

The Shift to Hybrid UX Research

Are you staring down long recruitment cycles, steep costs, and research timelines that just can’t keep up? You're not alone. The traditional way of doing UX research, while valuable, often creates friction with the fast pace of modern, agile product development.

This constant struggle has sparked a major shift in the industry. Teams can no longer afford to wait weeks for feedback that might derail an entire sprint. This is why hybrid UX research is quickly moving from a niche trend to a core strategy for high-performing teams.

Blending Human Insight with AI Speed

Think of this new model like a master chef who pairs timeless culinary skills with advanced kitchen tech. The chef’s expertise and palate (human insight) are irreplaceable for creating an exceptional dish. But high-tech ovens and precision tools (AI analysis) let them achieve perfection faster and more consistently.

In the UX world, that means combining the deep, empathetic understanding from human interaction with the incredible speed and scale of AI. This is the entire philosophy behind our platform, Uxia. We firmly believe in hybrid research. AI isn't here to replace researchers; it’s here to supercharge them by optimising research flows.

Practical Recommendation: At Uxia, we believe that AI should help optimise research flows and speed up product development while reducing risk. However, there is still value in real human insight. We position ourselves as a complement and believe in hybrid research, where synthetic users can provide very good early validation fast and, when more validation is needed, human insights help close the research loop. Borja Díaz-Roig, Uxia's CEO and Co-founder

This shift is happening everywhere. The global UX market is projected to soar from $3.5 billion in 2026 to $9.8 billion by 2034, with Europe playing a central role. A recent State of User Research report revealed that in Spain, 85% of UX researchers already favour mixed methods, frequently combining generative interviews with evaluative usability tests.

A hybrid model, where AI enhances human-led studies, directly solves the biggest pain points like costly recruiting delays and slow turnarounds. You can explore more data on the UX market growth and trends to see the full picture.

Why the Traditional Approach Is Falling Behind

Let’s take a quick look at the core differences between old and new.

Traditional vs Hybrid UX Research At a Glance

| Aspect | Traditional UX Research | Hybrid UX Research |
|---|---|---|
| Speed | Slow (weeks or months) | Fast (minutes or days) |
| Cost | High (recruiting, incentives) | Low (automated, scalable) |
| Scope | Limited by budget and time | Broad and iterative |

It’s clear that sticking to the old ways comes with real-world problems.

The classic research approach, while thorough, presents several challenges in today's fast-moving environment:

Time-Consuming Recruitment: Finding, screening, and scheduling qualified participants can take weeks, creating a huge bottleneck.

High Costs: Participant compensation, platform fees, and researcher time add up fast, especially for larger studies.

Slow Time-to-Insight: The entire process—from planning to synthesis—can drag on, delaying key product decisions and killing development momentum.

A hybrid approach tackles these issues head-on. For instance, using a platform like Uxia, you can get initial feedback from synthetic users in minutes, not weeks. This gives you incredibly valuable early validation—often enough to ship with confidence.

When you need more depth, that same AI-driven data helps you run smaller, more focused human studies, closing the research loop while saving a massive amount of time and money.

What Exactly Is Hybrid UX Research?

So, what are we really talking about when we say hybrid UX research? At its heart, it’s a smart way to combine different research methods to get a complete picture of the user experience—and get it much, much faster. This isn’t just about mixing qualitative (‘the why’) and quantitative (‘the what’) anymore.

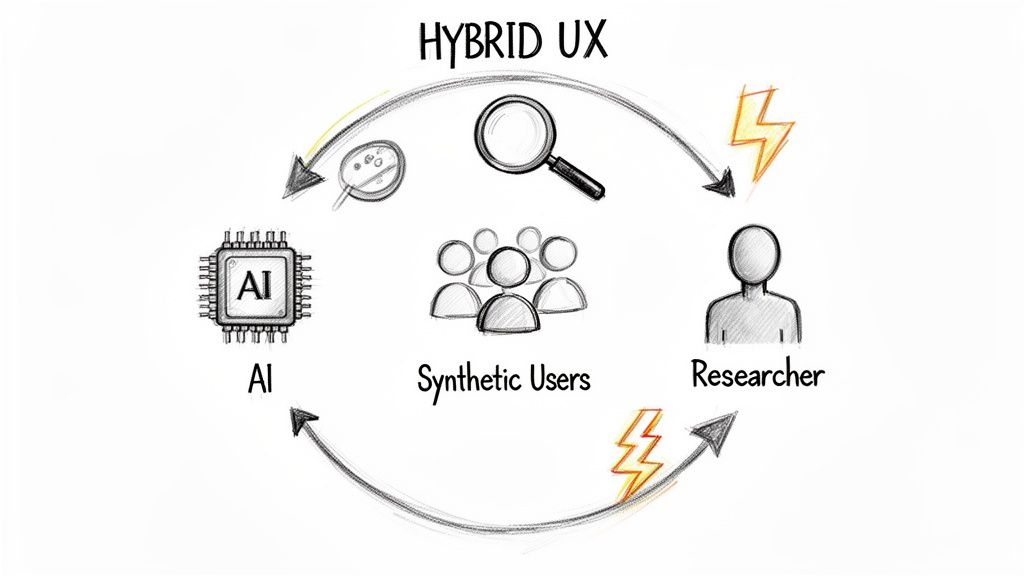

The modern version of hybrid UX research is a powerful, continuous loop where AI-driven insights and human expertise feed directly into each other. It’s about using the best of both worlds—machine speed and human nuance—to make better decisions at every step of product development.

This isn't just a trendy new term; it’s a direct response to how we build products and even how we work. The shift to hybrid work schedules across Europe has pushed UX teams to adopt more agile, remote-friendly, and AI-powered models. In 2025, 43% of UX researchers reported working a hybrid schedule with one to four remote days a week, a massive jump from only 21% in 2024. A Nielsen Norman Group report also found that 65% of UX teams are now experimenting with AI for tasks like sentiment analysis, cutting their time-to-insight by up to 50%.

The New Feedback Loop: AI + Human Insight

Imagine you’re ready to launch a new feature. The old way meant spending weeks recruiting participants for a big usability study, only to find critical flaws when it’s almost too late. A hybrid approach flips this entire process on its head for maximum efficiency.

With a platform like Uxia, you can start by running your prototypes past synthetic users. These AI-powered participants navigate your designs in minutes, giving you instant feedback on confusing copy, usability snags, and points of friction. You get actionable insights in hours, not weeks.

This is where the “hybrid” model really comes to life. The initial data you get from AI testing creates a laser-focused starting point for your human research.

From Broad Questions to Targeted Exploration

Instead of starting user interviews with vague questions like, “So, what do you think of the design?”, you can go in armed with specific issues that the AI has already flagged.

For instance, your Uxia report might show that 80% of synthetic users couldn’t find the “export” button. Now, your follow-up human research can focus entirely on digging into why that specific button is a problem.

Practical Recommendation: The core of Uxia’s philosophy is to use AI for rapid, early validation so you can ship with confidence. For many features, this is all you need. When deeper insights are required, you can bring in targeted human research to understand the complex emotions, motivations, and context that only people can provide. Borja Díaz-Roig, Uxia's CEO and Co-founder

This method completely transforms research from a slow, discovery-led slog into a fast, validation-driven engine.

AI-Powered Testing (with Uxia): Quickly uncovers usability bottlenecks, confusing navigation, and unclear messaging at scale.

Human-Led Interviews: Dive deep into the specific problems found by the AI, exploring the nuanced human emotions and thought processes behind them.

This combination lets you de-risk product decisions in record time while still capturing the rich, qualitative insights needed to build products people genuinely love. It’s the perfect balance between machine efficiency and human empathy, and this powerful duo is what makes hybrid UX research a game-changer for modern product teams. If you want to dig deeper into the differences, check out our guide on synthetic users vs human users.

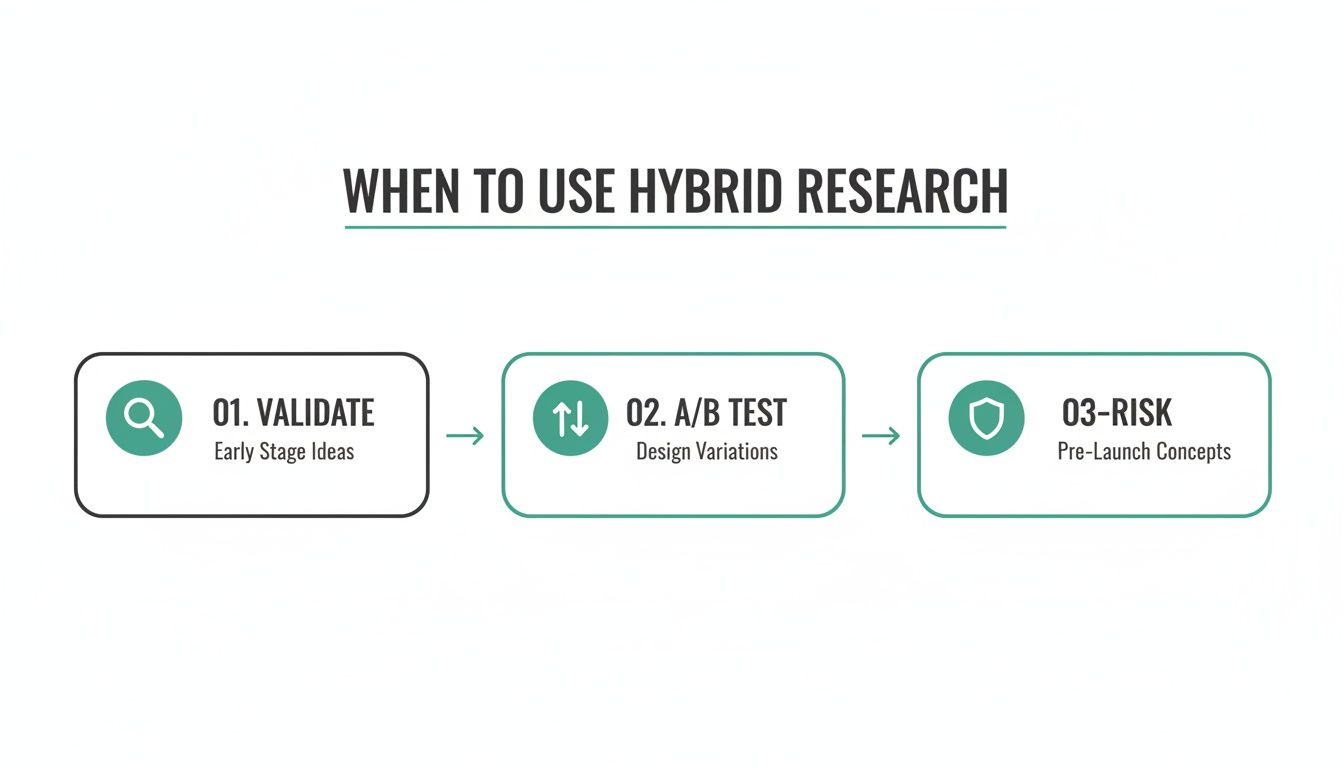

When to Use a Hybrid Research Approach

Knowing when to go hybrid is just as critical as knowing how. Hybrid UX research isn't a one-size-fits-all solution you slap onto every project. It's about being clever and strategic, picking the exact moments where it will give you the most leverage.

Think of it as a practical choice for product teams caught between the need for speed and the demand for deep, meaningful insights. It’s perfect for those high-stakes scenarios where you can’t afford to be wrong, but you also can’t afford to be slow.

So, where does this approach really make a difference? It shines brightest at key moments in the product lifecycle where you need both rapid feedback and a solid grasp of user behaviour. A hybrid workflow becomes your secret weapon, letting you move fast without flying blind.

For Early-Stage Discovery and Concept Validation

In the beginning, ideas are cheap, plentiful, and fragile. The main goal is to weed out the dead ends from the promising concepts as fast as humanly possible, long before you commit any real development resources. This is prime territory for a hybrid model.

Practical Recommendation: Start by throwing rough mock-ups into a platform like Uxia to test with synthetic users. In minutes, you’ll get a high-level read on your core value proposition and whether the initial flow makes any sense. This isn't about nuance; it's about quick validation to help you iterate. Once the AI has helped you filter down to one or two strong concepts, you bring in the humans. A few in-depth interviews with real users will uncover the why—their underlying needs, emotional reactions, and the subtle context that an algorithm can't capture.

To Rapidly Test and Iterate on Designs

Imagine your team is stuck between two or three competing designs for a new feature. The traditional route—building them all out for a live A/B test—is a massive drain on engineering time and resources. A hybrid workflow offers a much smarter path.

Instead, you can run all the design variations through Uxia's synthetic user testing at the same time. The platform will almost instantly spit back comparative data on crucial metrics:

Task Success Rates: Which design actually helps users get the job done?

Friction Points: Where are people getting stuck or confused in each version?

Clarity of Copy: Is the language in one design resonating better than the others?

This gives you a clear winner based on usability data, not just team opinion. From there, you can follow up with a small, targeted round of human testing to confirm the findings and gather richer qualitative feedback on the winning design before you commit it to code. If you want more ideas, we've got a great article covering practical use cases for synthetic user testing.
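To make the comparison concrete, here is a minimal sketch of how you might rank design variants once you have the numbers in hand. It assumes you have copied the session counts, completions, and friction-point counts out of your test reports by hand. The `VariantResult` shape, the tie-breaking rule, and the figures are all hypothetical and are not a Uxia export format or API.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Outcome of one design variant in a test run (hypothetical shape)."""
    name: str
    sessions: int          # number of sessions run against this variant
    completed: int         # sessions in which the task was completed
    friction_points: int   # distinct friction points flagged

    @property
    def success_rate(self) -> float:
        # Guard against division by zero when no sessions were run.
        return self.completed / self.sessions if self.sessions else 0.0


def rank_variants(results: list[VariantResult]) -> list[VariantResult]:
    """Sort by success rate (highest first), then by fewer friction points."""
    return sorted(results, key=lambda r: (-r.success_rate, r.friction_points))


# Made-up numbers, for illustration only.
results = [
    VariantResult("Design A", sessions=50, completed=31, friction_points=6),
    VariantResult("Design B", sessions=50, completed=44, friction_points=3),
    VariantResult("Design C", sessions=50, completed=44, friction_points=5),
]

ranked = rank_variants(results)
for r in ranked:
    print(f"{r.name}: {r.success_rate:.0%} success, {r.friction_points} friction points")
```

Breaking ties on friction points is just one reasonable choice. If two designs score this closely, that is usually a good signal to take both into the follow-up human sessions rather than trusting the ranking alone.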

Before a Major Feature Launch to De-Risk Rollout

There’s nothing more nerve-wracking than a big launch. A critical bug or a major usability flaw that slips through the cracks can torpedo user trust and send your team into a costly, all-hands-on-deck emergency. A hybrid approach is your final line of defence.

Practical Recommendation: Before a big launch, use AI testing on Uxia to quickly catch and fix critical usability flaws, ensuring a smoother release. After launch, follow up with human-led interviews to understand real-world adoption and user satisfaction.

This two-step process gives you the best of both worlds—speed and depth—and dramatically lowers the odds of a failed launch. The AI acts as a wide net, catching functional issues and obvious friction points. The human follow-up confirms the feature actually solves a real-world problem and meets your customers' expectations.

This blend of AI and human insight is rapidly becoming standard practice. For example, Spain's Digital Decade 2026 report noted that 9.2% of Spanish enterprises had adopted AI by 2025, already putting them ahead of the EU average. You can read more on the country's AI roadmap and how it’s shaping business. By letting a tool like Uxia handle the rapid, scalable analysis, you free up your human researchers to focus on what they do best: tackling the strategic, high-impact questions that define a product's long-term success.

Building Your First Hybrid UX Research Workflow

Ready to get your hands dirty? Creating your first hybrid UX research workflow is simpler than it sounds. The secret is blending the raw speed of AI with the irreplaceable depth of human insight.

It’s all about building a repeatable blueprint that gets you answers faster, de-risks your roadmap, and helps you ship better products. This workflow uses AI-driven insights to set the stage perfectly for focused, meaningful conversations with real users.

Let's walk through the steps.

Step 1: Start With a Core Research Question

Every great study starts with a single, focused question. Before you even think about tools, define what you absolutely need to learn.

Are you trying to validate a new feature? Figure out why a key metric is tanking? A strong research question is your north star.

Good examples look like this:

"Can a first-time user get through our new onboarding flow without help?"

"Which of these three dashboard layouts feels most intuitive to our power users?"

"Why are people abandoning their carts after adding this specific product?"

With a clear question, you can point both your AI and human research in the same direction to get a single, cohesive answer.

Step 2: Run Fast, Broad Tests With AI

This is where you hit the accelerator. Instead of spending days recruiting human testers, start with a rapid, broad analysis using an AI tool. For testing designs and prototypes, a platform like Uxia is built for exactly this.

Just upload your designs, define the user task from your research question, and let synthetic users go to work. In minutes, you’ll have a pile of data, including:

Heatmaps showing exactly where users looked.

Transcripts of AI users "thinking aloud" as they navigated the flow.

A clear list of friction points, usability snags, and confusing copy.

Think of this as a powerful first filter. It highlights the biggest problems immediately, without the time and expense of manual recruiting. To see this in action, you can learn more about our AI-driven workflows for synthetic user testing.

The flowchart below shows how this hybrid approach works in practice to validate ideas, test designs, and de-risk your decisions before launch.

By front-loading your process with AI, you get the initial data you need to move forward with confidence while systematically killing risk.

Step 3: Form Sharp Hypotheses From AI Insights

Now that you have the AI-generated data, it’s time to find the patterns. Did most synthetic users get stuck on the same button? Did the “think-aloud” transcripts repeatedly mention confusion over a specific label?

Use these findings to move from a vague hunch to a sharp, testable hypothesis.

For instance, instead of guessing, you can state: "We believe users are abandoning the checkout because the 'Apply Discount' field isn't visible enough, which causes frustration and makes them leave."

This hypothesis isn't a shot in the dark—it's directly informed by data, and it gives your next phase of research a crystal-clear purpose.
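If you want to spot those patterns systematically rather than by eye, a small script can do the counting. The sketch below assumes each synthetic session has been reduced to a list of friction-point labels. The labels, the 50% threshold, and the `recurring_issues` helper are illustrative assumptions, not anything a specific tool produces.

```python
from collections import Counter

# Each session is the list of friction points it hit (hypothetical labels).
sessions = [
    ["discount_field_hidden", "shipping_cost_unclear"],
    ["discount_field_hidden"],
    ["discount_field_hidden", "slow_payment_form"],
    [],
    ["discount_field_hidden"],
]


def recurring_issues(sessions, min_share=0.5):
    """Return (issue, share) pairs for issues hit in at least min_share of sessions."""
    if not sessions:
        return []
    # Use set() so an issue counts once per session, even if it was hit repeatedly.
    counts = Counter(issue for session in sessions for issue in set(session))
    total = len(sessions)
    return [
        (issue, n / total)
        for issue, n in counts.most_common()
        if n / total >= min_share
    ]


candidates = recurring_issues(sessions)
for issue, share in candidates:
    print(f"Hypothesis candidate: {issue} (seen in {share:.0%} of sessions)")
```

Anything that clears the threshold is a candidate for a written hypothesis. Issues that show up only once are better kept as notes than turned into interview questions.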

Step 4: Conduct Focused Human-Led Sessions

It’s time to bring in the humans. The real beauty of a hybrid workflow is that you no longer need a huge, expensive study to get answers.

The AI has already told you the what and the where. Now you just need to recruit a small, relevant group of real users (often five is enough) to find out the why.

Your qualitative sessions become laser-focused. You’re no longer on a fishing expedition. Instead, you can use your hypotheses to guide the conversation:

"Show me how you would apply a discount on this page."

"I noticed you paused there for a second. What was going through your mind?"

"What did you expect to happen when you clicked that button?"

Practical Recommendation: A hybrid model doesn't aim to replace human insight; it elevates it. By using AI to handle the broad, repetitive analysis, you free up your researchers to focus on the complex human emotions, motivations, and context that drive behaviour.

To keep things moving, smart tools can make a huge difference. For example, using AI transcription software for interviews can dramatically speed up the process of turning recorded sessions into searchable text, helping you pull out key themes in a fraction of the time.

Step 5: Synthesise All Data for a Complete View

This is where it all comes together. Your final step is to merge the quantitative findings from your Uxia tests (like task success rates and friction scores) with the rich qualitative insights from your human interviews.

Look for the overlaps. If your AI test flagged a confusing button and your human interviews confirmed that users found its label misleading, you have a high-confidence, actionable insight.

This unified view gives you a 360-degree understanding of the problem and the evidence you need to push a solution forward.
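One lightweight way to look for those overlaps is to tag both data sets with the same issue labels and compare them. The sketch below assumes you have done that tagging manually. The friction scores, the quotes, and the `synthesise` helper are invented for illustration.

```python
# Issues flagged by the AI test, with a friction score (hypothetical 0-1 scale).
ai_flags = {
    "export_button_hard_to_find": 0.8,
    "cta_copy_unclear": 0.6,
    "settings_menu_deep": 0.3,
}

# Issues tagged during human interviews, with supporting quotes (invented examples).
interview_notes = {
    "export_button_hard_to_find": ["I kept looking in the File menu."],
    "pricing_page_confusing": ["I couldn't tell which plan I was on."],
}


def synthesise(ai_flags, interview_notes):
    """Group issue labels by which research source backs them."""
    ai, human = set(ai_flags), set(interview_notes)
    return {
        "confirmed_by_both": sorted(ai & human),  # high-confidence findings
        "ai_only": sorted(ai - human),            # candidates for more human follow-up
        "human_only": sorted(human - ai),         # things the AI test did not surface
    }


view = synthesise(ai_flags, interview_notes)
for bucket, issues in view.items():
    print(f"{bucket}: {issues}")
```

The "confirmed by both" bucket is where your strongest recommendations come from. The "human only" bucket is worth a second look too, since it shows what the synthetic run missed.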

Sample Hybrid Research Plan Template

To help you put this into practice, here is a simple template your team can use to plan out a hybrid research sprint. It helps ensure everyone is aligned on the objectives, methods, and what you expect to learn at each stage.

| Phase | Objective | Method (Tool) | Output/Metric |
|---|---|---|---|
| 1. AI-Driven Analysis | Identify major usability issues and friction points in the new checkout flow. | Uxia Synthetic User Testing | Heatmaps, click paths, think-aloud transcripts, friction score. |
| 2. Hypothesis Generation | Form 2-3 specific hypotheses based on patterns in the AI data. | Team Analysis Session | A list of prioritised, testable hypotheses. |
| 3. Human Validation | Explore the why behind the top usability issue identified by the AI. | Moderated User Interviews (5 users) | Verbatim quotes, emotional feedback, task success confirmation. |
| 4. Synthesis & Action | Combine qual/quant data to create a final report with clear recommendations. | Miro Board / Notion Doc | A prioritised list of design changes for the next sprint. |

This structured approach ensures your research is not only fast and efficient but also deeply insightful, giving you the best of both worlds.

Hybrid Research in Action: A Uxia Case Study

Theory is one thing, but seeing hybrid UX research in the wild is where it really clicks. Let’s walk through a real-world scenario to show how blending AI and human insight delivers tangible results.

Meet ‘ConnectApp,’ a startup building a new onboarding flow for their social networking app. Like a lot of young companies, they were running against tight deadlines and an even tighter budget. A long, traditional usability study was completely off the table, but they couldn’t afford to launch such a critical feature blind.

This is where they turned to a hybrid approach, using our platform, Uxia, to spearhead the effort. Their goal was straightforward: validate their new onboarding design and ship a polished experience, all within a single two-week sprint.

The First 24 Hours: Synthetic User Testing

ConnectApp’s design team came up with three different versions of the onboarding flow. Instead of getting bogged down in a lengthy A/B test or days of moderated sessions, they simply uploaded all three prototypes into Uxia.

Within a few hours, they had a full analysis. The results came back fast and were incredibly clear.

Critical Navigation Flaw: Uxia’s synthetic users almost immediately found a major dead end in two of the three designs. They were getting stuck after the second step with no obvious way to move forward.

Confusing Microcopy: While the third design’s layout was solid, the AI’s “think-aloud” transcripts showed that the copy on the final call-to-action button was unclear and causing hesitation.

Armed with this data, the ConnectApp team knew right away which two designs to scrap. They didn’t waste a single minute debating opinions or scheduling more meetings. The AI gave them a clear, data-driven path forward and saved them from chasing flawed concepts.

The Human Touch: Closing the Loop

With a clear winner, the team quickly tweaked the confusing microcopy based on the AI’s feedback. Now, they were ready for the second phase of their hybrid plan: human validation.

Instead of recruiting a big, expensive panel of testers for a broad study, they knew exactly what they needed to dig into. Guided by Uxia’s findings, they set up just five moderated interviews with real users from their target audience.

At Uxia, we believe in this exact model. AI helps optimise research flows and speeds up development by providing fast, early validation. Sometimes that's enough to ship, but when it's not, targeted human validation helps close the research loop.

During these brief sessions, the researcher was able to ask questions with surgical precision. They weren't asking, "So, what do you think?" They were asking, "Tell me what you expected to happen when you saw this button."

The feedback from those five users was consistent with what the AI had found: the navigation flow felt smooth and intuitive, and the revised copy was now perfectly clear. They had successfully validated their design by combining the scale of AI with the irreplaceable nuance of human insight.

The Final Result: Speed and Confidence

By embracing a hybrid research model, ConnectApp achieved something remarkable. They went from three competing designs to a single, polished, and user-validated onboarding flow in just one sprint.

The practical benefits were huge:

Time Saved: They squeezed weeks of traditional research into just a few days.

Budget Optimised: They sidestepped the high cost of a large-scale usability study, needing only a handful of targeted interviews.

Risk Reduced: They launched with high confidence, knowing their design was vetted for both usability problems and contextual understanding.

This case study is a perfect illustration of how hybrid UX research, powered by a tool like Uxia, isn't just a concept—it's a practical, effective strategy for building better products, faster.

Measuring Success and Avoiding Common Pitfalls

A great strategy needs great metrics. If you’re going to bring hybrid UX research into your workflow, you need to show it’s actually working. That means moving past gut feelings and tracking the numbers that prove its value to the product development cycle.

Start with Time-to-Insight. How fast can you get from a research question to a real, actionable answer? A hybrid approach should shrink this down from weeks to just days—or even hours—especially when you’re using a platform like Uxia for that initial burst of testing.

Then look at your Research Cost per Sprint. By swapping out big, pricey human panels for quick AI analysis and smaller, focused human sessions, your total spend should drop. Also, keep an eye on the number of critical issues caught pre-launch. This is a direct measure of how hybrid methods are de-risking your roadmap.
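These three metrics are simple enough to track in a spreadsheet, but here is a rough sketch of the arithmetic in code. The function names, cost categories, and example figures are assumptions for illustration. The catch rate in particular is one possible way to turn "issues caught pre-launch" into a ratio, not an established standard.

```python
from datetime import datetime


def time_to_insight_hours(question_asked: datetime, insight_delivered: datetime) -> float:
    """Hours between posing the research question and having an actionable answer."""
    return (insight_delivered - question_asked).total_seconds() / 3600


def research_cost_per_sprint(costs: dict[str, float], sprints: int) -> float:
    """Total research spend divided evenly across the sprints it covered."""
    if sprints <= 0:
        raise ValueError("sprints must be a positive integer")
    return sum(costs.values()) / sprints


def pre_launch_catch_rate(caught_before_launch: int, found_after_launch: int) -> float:
    """Share of known critical issues that were caught before launch."""
    total = caught_before_launch + found_after_launch
    return caught_before_launch / total if total else 0.0


# Invented example figures.
hours = time_to_insight_hours(datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 4, 15, 0))
cost = research_cost_per_sprint(
    {"synthetic_testing": 300.0, "participant_incentives": 500.0, "researcher_time": 1200.0},
    sprints=2,
)
rate = pre_launch_catch_rate(caught_before_launch=9, found_after_launch=1)

print(f"Time-to-insight: {hours:.1f} h")
print(f"Research cost per sprint: {cost:.2f}")
print(f"Pre-launch catch rate: {rate:.0%}")
```

Whatever definitions you pick, keep them fixed from sprint to sprint so the trend is comparable before and after you adopt a hybrid workflow.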

Common Pitfalls and How to Sidestep Them

A hybrid model is powerful, but it's not foolproof. Knowing the common tripwires is the best way to make sure your research stays balanced and effective.

The biggest mistake? Relying too much on AI and missing the human nuance. AI tools like Uxia are brilliant for speed and finding usability issues at scale. But they can’t capture the messy, complex emotions, cultural context, or deep-seated motivations that actually drive people.

Practical Recommendation: The Uxia philosophy is built on balance. AI is a powerful partner that provides speed and scale, but it works best when complementing the irreplaceable value of human empathy and strategic insight.

To avoid this trap, always use AI findings as your launchpad, not your final destination. Use the data from Uxia to build sharp hypotheses, then bring in a handful of real users to dig into the why behind the what.

Failing to Integrate Your Findings

Another classic mistake is keeping your AI and human insights separate. If your synthetic user data lives in one place and your interview notes in another, you’re missing the whole point of hybrid research. The goal is a single, complete picture.

This is where knowing how to properly analyse qualitative data becomes essential for weaving everything together into a cohesive story.

A simple fix is to create a unified insights repository. Use a tool like Miro or Notion to map AI-generated friction scores and heatmaps directly against the qualitative quotes from your human interviews. That’s how you unlock real product intelligence and start making decisions with confidence.

Your Hybrid UX Research Questions Answered

Whenever a new model like hybrid UX research comes along, it’s natural to have questions. We hear them all the time from teams making the switch. Let's get straight to the most common ones so you can move forward with total confidence.

Can AI Fully Replace Human Testers?

Not a chance—and we designed it that way. The real power comes from seeing AI and humans as partners, not competitors. A platform like Uxia gives you incredibly fast, reliable validation early on, which is often more than enough to de-risk a feature and get it shipped.

But when you need to understand deep-seated motivations or complex human emotions, there's no substitute for targeted, qualitative feedback from real people. Think of it this way: AI is brilliant at finding the "what," but humans are essential for uncovering the "why." Together, they give you the complete picture.

How Do I Convince My Team to Invest?

It all comes down to the return on investment (ROI). A hybrid approach doesn't just tweak your workflow; it completely transforms your efficiency. You're slashing the time it takes to get insights, cutting research costs by reducing the need for massive human studies, and making smarter product decisions that prevent costly mistakes down the line.

Using a tool like Uxia lets your team move faster and build with more confidence. It stops being an expense and becomes a direct investment in your product's quality and your team's velocity, which feeds right back into the bottom line.

Is This Model Viable for Small Teams or Freelancers?

Absolutely. In fact, it's almost tailor-made for them. For a small team, recruiting, managing, and paying a panel of human testers is a huge drain on time and money. Using an AI tool like Uxia for that initial heavy lifting is far more cost-effective.

It levels the playing field, giving smaller teams and solo operators access to powerful insights that were previously only possible with an enterprise-level budget.

Does Using AI in Research Introduce Bias?

It’s a fair question, but here’s the reality: it can actually reduce certain types of bias that plague traditional research. The synthetic participants on Uxia don't get tired, they don't have bad days, and they don't turn into "professional testers" who know exactly what you want to hear.

A well-designed hybrid plan takes this even further. By balancing the objective, pattern-based data from AI with the subjective, nuanced feedback from humans, you get a far more rounded and reliable view of the user experience.

Ready to see how a hybrid model can transform your workflow? With Uxia, you can get rapid, actionable insights from synthetic users in minutes. Start building better products faster and with more confidence. Try Uxia for free today.