Uxia on Lee & Lee UX Podcast: Why the Future of UX Research Is Hybrid

Discover how Uxia is redefining UX research with synthetic user testing, as discussed on the Lee & Lee UX Podcast with industry experts.

UX research has always been built on one simple truth: teams make better product decisions when they understand how people experience what they build.

But the way teams work has changed. Product cycles are faster. Design iterations happen weekly, sometimes daily. Experimentation teams need evidence before launch, not weeks after a research request enters the queue. And yet, traditional user testing still depends on recruiting participants, scheduling sessions, watching recordings, synthesising findings, and turning all of that into decisions.

That tension was at the centre of the conversation when Uxia founders Borja Diaz-Roig and Victor Perdiguer joined Lee Cooper and Lee Duddell on the Lee & Lee UX Podcast (listen to the full episode here).

The conversation matters because Lee & Lee is not a generic tech podcast. It sits inside the UX research community. Lee Duddell, in particular, has long been associated with remote usability testing: he founded WhatUsersDo, a remote testing service used by major brands, and has repeatedly argued for moving teams away from hunch-based UX decisions and towards real user insight. Lee Cooper is an industry veteran with decades of experience at companies such as UserTesting, UserZoom, and Google.

That makes the discussion around synthetic user testing especially relevant. Uxia is not trying to replace the craft of UX research. It is asking a more practical question:

What if teams could get directional UX feedback in minutes, use it to remove obvious friction, and then reserve human research for the decisions where human depth matters most?

From traditional usability testing to AI-powered UX validation

Uxia was built around a clear product problem: user testing is valuable, but it is often too slow and expensive to use as frequently as modern product teams need.

On Uxia, teams can define a mission, provide a scenario, upload designs or prototypes, choose a target audience, and run tests with AI synthetic testers. These testers navigate the experience, surface UX friction, and generate structured feedback without the recruitment and scheduling overhead of traditional testing. Uxia’s public site describes the product as helping teams “validate your UX in minutes with AI testers” and positions the platform around high-quality UX/UI insights without recruiting participants.

That speed is not just a convenience. It changes where research can happen in the product process.

Instead of waiting until a prototype is polished enough to justify recruiting users, teams can run synthetic user testing earlier: during concept validation, design iteration, or pre-launch QA. The result is a faster loop:

Create or update a product flow.

Run AI user testing with synthetic testers.

Identify friction, confusion, and accessibility issues.

Fix the design.

Re-test before involving human participants.

Internally, we describe this as a hybrid research model: synthetic data is strongest for early iterations, fast validation, and directional insights, while human research closes the loop where context, motivation, and real-world nuance are essential.

Why Lee & Lee was the right place for this conversation

Lee Cooper and Lee Duddell bring the kind of practitioner perspective that matters when discussing AI in UX research.

This is not a debate about AI in the abstract. It is a debate about whether new research methods can help teams make better decisions without losing the discipline that made UX valuable in the first place.

Lee Cooper brings a strong blend of product, research, and innovation experience to the conversation. Based in London, he has been deeply involved in the evolution of UX research and AI-driven research tooling, contributing to discussions about how research systems need to adapt to modern product development. His work reflects a focus on scaling research impact across organisations such as Google and UserTesting, aligning UX insights with business strategy, and exploring emerging areas like AI-moderated research and synthetic users.

Lee Duddell’s background is especially relevant. WhatUsersDo was built around remote usability testing and “thinking aloud” user feedback, giving organisations access to real users so they could see what people did, hear what they thought, and make product decisions based on evidence rather than internal opinion.

That history creates a useful bridge to Uxia’s approach.

Traditional remote user testing gave teams a way to watch real users experience a product. Uxia extends that logic into an AI-native workflow: synthetic testers interact with designs, produce transcripts, expose friction, and help teams understand where a product experience may break before human testing begins.

The point is not that synthetic testers are “better than users.” The point is that many product teams are currently forced to choose between moving fast and doing research. Synthetic user testing creates a third option: move fast with evidence, then validate deeply with humans when the decision requires it.

What synthetic testers are good at

Synthetic testers are particularly useful when teams need fast, structured feedback on product flows.

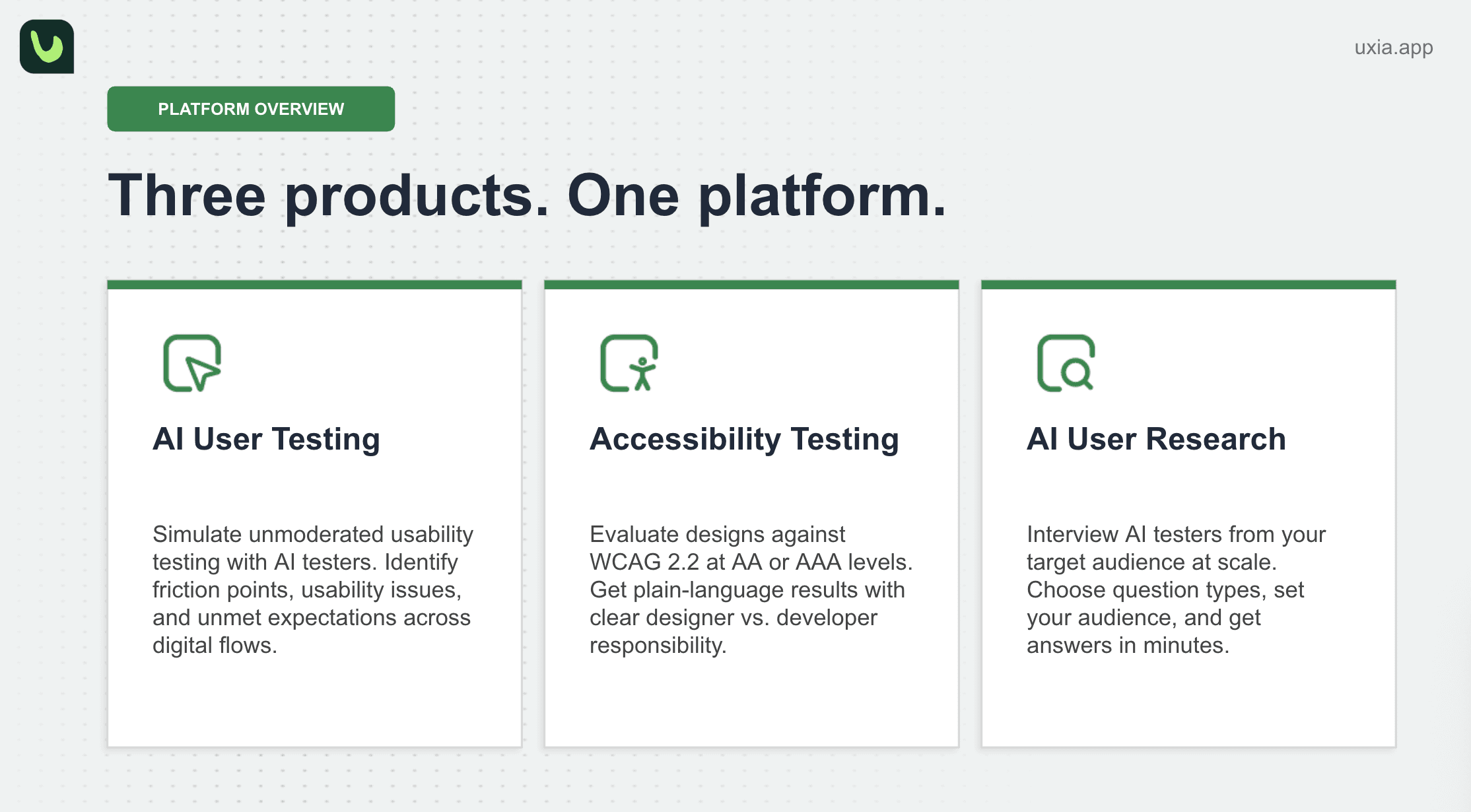

For example, Uxia’s platform can support unmoderated usability testing, accessibility testing, and AI user research. The core product started with usability testing: sharing a prototype with an audience, letting synthetic testers navigate it, and identifying usability issues. Uxia has since expanded into accessibility testing against WCAG standards and qualitative user research through synthetic-tester interviews.

That matters for teams working on:

Checkout flows

Onboarding journeys

SaaS dashboards

Mobile app navigation

Feature discovery

E-commerce conversion paths

Accessibility improvements

Early-stage product concepts

International or niche audience scenarios

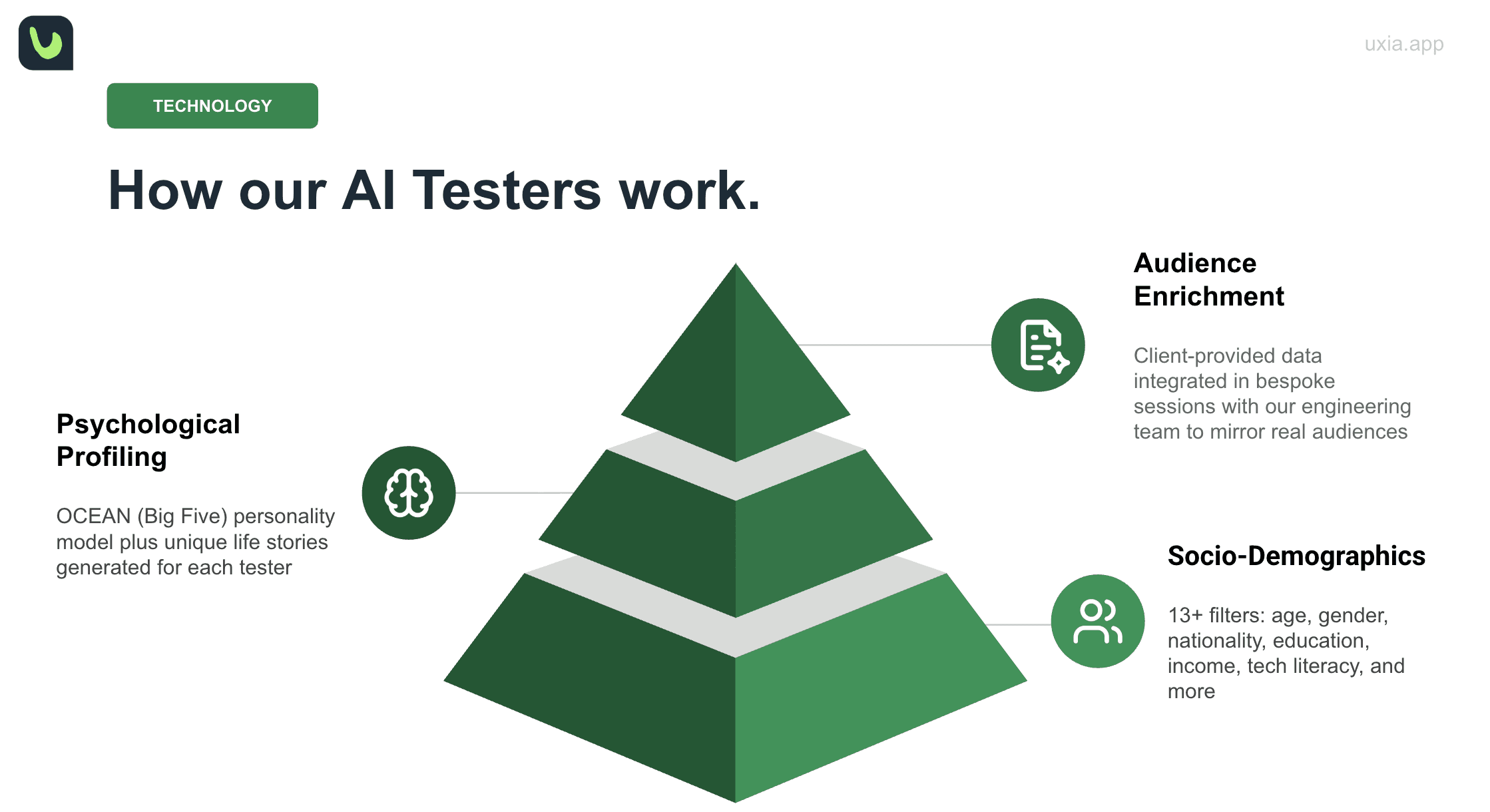

In Uxia, synthetic testers are not generic AI responses. Each audience is built on three pillars: sociodemographic characteristics, a psychological profile based on the OCEAN (Big Five) personality framework, and enrichment from public or client-provided research data.

That enables a product team to ask a more precise question than “does this design work?”

They can ask:

How might this specific audience experience this specific mission in this specific context?

That is where synthetic user testing becomes valuable. It gives teams a way to test not just screens, but assumptions.

Why hybrid UX research matters

The strongest argument for synthetic user testing is not replacement. It is prioritisation.

Human research is still essential. People bring lived experience, emotion, social context, contradictions, workarounds, and unexpected behaviour. Those are not details to automate away. They are the reason UX research exists.

But human research time is limited. Recruiting the right people takes effort. Running sessions takes time. Synthesis takes attention. If researchers spend that time finding obvious usability problems that could have been caught earlier, organisations waste both research capacity and participant goodwill.

Uxia’s position is that AI synthetic testers should be used to accelerate the early loop, while human research should be used to validate, explain, and de-risk the decisions that matter most. In one internal product discussion, Victor Perdiguer explains that Uxia does not position the product as a full substitute for UX research; instead, it is used to speed up work, reach hard-to-access users, introduce specific contexts, and complement human feedback when the stakes are high.

That hybrid model is especially powerful for modern product teams because it creates a better division of labour:

Use synthetic testers for:

Early feedback

Rapid UX validation

Repeated design iteration

Friction detection

Accessibility review

Scenario testing

Hard-to-recruit audience simulation

Preparing better human studies

Use human participants for:

Final validation

Emotional and motivational depth

Behaviour in real environments

High-stakes decisions

Discovery research

Edge cases

Trust-building with stakeholders

This is the practical future of UX research: not AI instead of humans, but AI before humans, around humans, and in support of better human research.

A better research workflow for product teams

The industry has already moved from “big research project” thinking to continuous discovery, product analytics, experimentation, and rapid iteration. But UX research tooling has not always kept up with that operating model.

Uxia’s approach gives teams a workflow that fits how products are actually built.

A product designer can test a prototype before sending it to stakeholders. A product manager can check whether a flow supports a mission clearly enough. A growth team can detect conversion friction before launching an A/B test. An agency can give clients a stronger rationale for design decisions. An enterprise team can use synthetic testing to triage issues before investing in human studies.

This is where the label “synthetic user testing” undersells the real value. The benefit is not just synthetic users. The benefit is research velocity.

Our platform is built around this idea: AI testers can explore designs, surface UX friction, and provide actionable insights without scheduling or coordination. The platform also includes AI Live Test, AI User Research, Accessibility Testing, and Human Insights as part of a broader AI-powered user testing suite.

That combination is important. A synthetic-only tool may help teams move quickly, but a hybrid UX research platform helps teams move quickly and responsibly.

The trust question: can synthetic user testing be reliable?

Every serious conversation about AI user research eventually reaches the same question:

How much can we trust the results?

Uxia’s answer is methodological, not magical. We validate synthetic outputs against a “golden data set” of successful human user tests, then back-test product improvements against that benchmark. We also run comparisons between synthetic testers and regular human panels to understand where synthetic testers perform well, where they fail, and how models need to evolve.

That is the right way to frame AI in UX research.

Synthetic testers should not be treated as unquestionable truth. They should be treated as decision support. Their outputs need structure, validation, benchmarking, and human oversight.

Uxia also addresses a common concern: AI testers may behave like designers or technical reviewers instead of normal users. We use settings such as tech literacy to adjust how digitally proficient testers are, and we apply analysis layers to separate user-like behaviour from UX/UI evaluation.

That distinction is critical. A synthetic tester should not simply critique an interface like a UX expert. It should simulate how a user with a defined profile, context, and mission might move through the product.

What this means for agencies, scaleups, and enterprises

The implications are different depending on the team.

For agencies, synthetic user testing can improve pre-sales, design validation, and client reporting. Instead of presenting a design as “our recommendation,” teams can support decisions with structured UX evidence.

For scaleups, Uxia can help product and design teams test more often without slowing down sprints. This is especially useful when teams do not have a dedicated research function or need quick feedback before committing engineering resources.

For enterprises, synthetic user testing can act as a scalable first layer of UX validation across many teams, markets, and flows. It can help researchers focus human studies on the highest-value questions instead of repeatedly catching the same avoidable usability issues.

From hunch-driven design to evidence-driven iteration

One reason the Lee & Lee conversation is so relevant is that UX has always fought against internal opinion masquerading as user insight.

Lee Duddell’s earlier work with WhatUsersDo was built on the idea that organisations should not rely on internal hunches when they can observe real user behaviour.

Uxia continues that same philosophy in a new context.

The problem is no longer only that teams make decisions based on hunches. The problem is that teams often know they should test, but the cost and time of testing make it difficult to do continuously.

Synthetic user testing lowers that barrier.

It gives teams a way to ask better questions earlier:

Will users understand this screen?

Is the mission clear?

Where do they hesitate?

What do they expect to happen next?

Which parts of the flow create friction?

What accessibility issues are we missing?

Which insights deserve human validation?

That is not a replacement for research maturity. It is a way to operationalise it.

The future of UX research is not synthetic vs human

The most useful way to think about this shift is not “synthetic versus human.”

It is synthetic plus human.

Synthetic testers can help product teams learn faster. Human participants help teams understand deeper. Synthetic testing can remove obvious friction before a study. Human research can validate motivation, emotion, and real-world context. AI can scale structured feedback. Researchers can interpret meaning and decide what matters.

That is the future Uxia is building towards: a hybrid UX research workflow where product teams can validate designs in minutes, then bring humans in where their insight is most valuable.

As Borja and Victor’s appearance on the Lee & Lee UX Podcast shows, this is no longer a theoretical discussion. It is becoming a practical question for every product team:

How do we make UX research fast enough to keep up with product development, without losing the human insight that makes research valuable?

At Uxia, the answer is clear.

Start with AI synthetic testers. Learn fast. Fix fast. Then use human insight where it matters most.

That is how modern teams can build better products with more confidence, less guesswork, and a research process that finally matches the speed of product development.

Listen to the full podcast here!